We’ve all been there. You already told the model you use Fastify. That you want clarifying questions before long responses. That you chose the MongoDB raw driver over Mongoose for a specific reason. Next session, it has no idea and meets you as a complete stranger.

The other thing that bothered me about existing systems: they store facts but not what to do with them. “Character doesn’t like being yelled at” is a fact. “Use reactions to raised voices as the main window into this character’s inner life, and let that discomfort shape every confrontation scene without stating it explicitly” is an instruction the model can act on without further inference. Every belief in Tenure carries a `why_it_matters` field that does this conversion at extraction time, when the model has the full conversational context to get it right.
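To make the fact-versus-instruction split concrete, here is a minimal sketch of what such a record might look like. Only the `why_it_matters` field name comes from Tenure; the `Belief` interface, the `id` and `fact` fields, and the two helper functions are hypothetical names made up for illustration, not Tenure's actual schema or API.

```typescript
// Hypothetical record shape. Only `why_it_matters` is a real Tenure field name;
// `id` and `fact` are assumptions for this sketch.
interface Belief {
  id: string;
  fact: string;            // what was observed in the conversation
  why_it_matters: string;  // the instruction derived from it at extraction time
}

const yellingBelief: Belief = {
  id: "character-yelling",
  fact: "Character doesn't like being yelled at.",
  why_it_matters:
    "Use reactions to raised voices as the main window into this character's inner life, " +
    "and let that discomfort shape every confrontation scene without stating it explicitly.",
};

// Prefer the actionable instruction; fall back to the bare fact if none was extracted.
function toInstruction(belief: Belief): string {
  return belief.why_it_matters.trim() || belief.fact.trim();
}

// Render retrieved beliefs as a bullet list that could be prepended to a prompt.
function buildMemoryPreamble(beliefs: Belief[]): string {
  return beliefs.map((b) => `- ${toInstruction(b)}`).join("\n");
}

console.log(buildMemoryPreamble([yellingBelief]));
```

The point of the sketch is that the model receives the instruction directly, so it never has to work out at answer time what the fact implies.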

Most memory systems hand the model a pile of vaguely related facts and hope it figures out what is relevant, because vector search can’t tell your MongoDB decision apart from your Redis decision or your TypeScript preferences. They all live in the same semantic neighborhood. Tenure retrieves the exact belief that applies to the current moment.

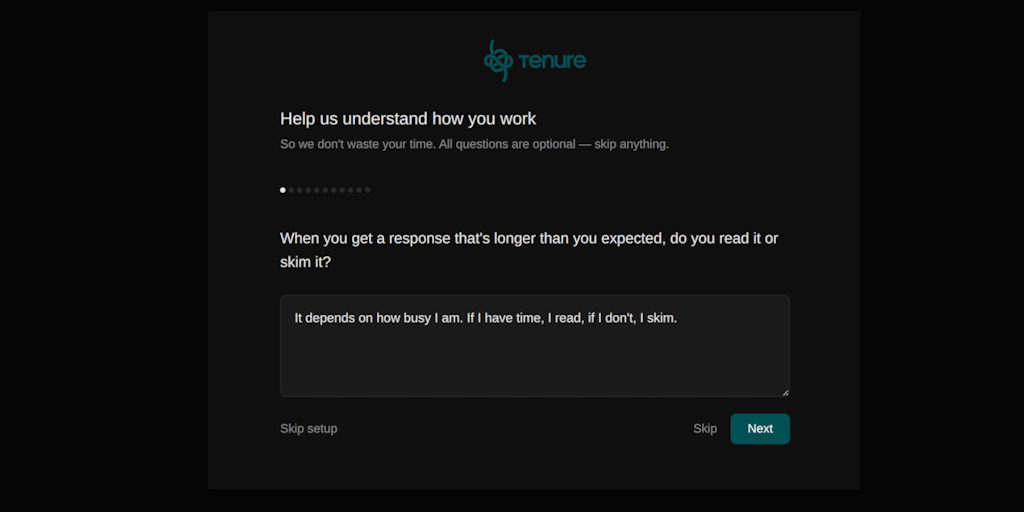

Tenure is a local-first, privacy-focused proxy that sits between your client and any LLM. Next session, it already knows your stack, your preferences, and what you ruled out. It doesn’t meet you as a stranger.