For someone who steered clear of anything AI-related for a very long time, self-hosting my own large language models made me aware of how productive local models can be. Whether it’s assisting my troubleshooting efforts after a botched project renders my home lab offline, extracting exact text snippets from abysmally long documents, or helping me organize my bookmarks, local LLMs are now a staple part of my FOSS arsenal.

Plus, the more I use LLMs, the more I’ve grown to appreciate the sheer variety of models at my disposal. Rather than locking myself into a proprietary LLM ecosystem or being forced to choose between similar models trained with the same algorithms, my self-hosted Ollama, LM Studio, and (most importantly) llama.cpp setups let me switch LLMs depending on the workload. Not just different parameter counts, mind you. I’m talking about entirely different multimodal capabilities, data inputs, and machine-learning algorithms, and it’s by far the most useful aspect of local models in 2026.

Not all LLMs are created equal

The “best LLM” depends entirely on your use case

If you lurk in AI-centric forums, you’ve probably come across posts highlighting specific LLMs as the next best thing since sliced bread. And well, they aren’t wrong. Despite their lower computing prowess compared to their cloud counterparts, local LLMs are versatile enough to process queries over a wide range of topics, even more so once you start looking at high-parameter models.

But once you start using them extensively, you may encounter certain models producing unsatisfactory results in some situations. That wouldn’t be an issue if not for the fact that the same LLM performs extremely well in other tasks. A lot of these inconsistencies can be attributed to the algorithms, training data, quantization levels, and tuning methods that shaped these LLMs.

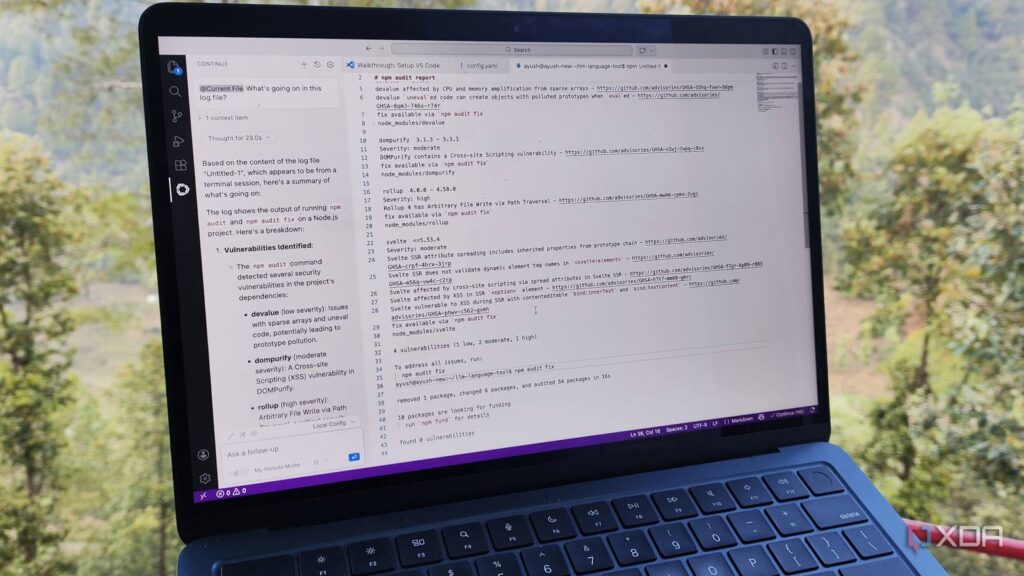

For example, Qwen 2.5 Coder (the higher-parameter variants) and GPT-OSS are considered the crème de la crème for programming workloads, while the DeepSeek lineup is typically the preferred option for workloads requiring a lot of reasoning on the clanker’s side. This, in turn, heavily influences the model’s utility in everyday productivity tasks. For example, I’ve had the best luck using Llama 3.1 for processing long notes and querying them for research on Open Notebook, while Qwen 2.5 Coder works really well for autocomplete suggestions in VS Code. Meanwhile, Qwen 3.5 and DeepSeek are better for calling tools on external apps (specifically, my NAS server, Nextcloud instance, and Home Assistant hub) via MCP servers.

That’s before you factor in parameter size and multimodal capabilities

Even though the training data matters quite a bit, the parameter count is another key factor that determines an LLM’s computational firepower. For example, when I tried to use conversational language while invoking tools via 1B models, they’d often hallucinate or throw errors. Switching to 9B and larger models eliminated this problem, as they have far more parameters to capture deeper patterns, though they come with a higher performance tax, especially on old consumer hardware such as mine.
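
To put a rough number on the cost of larger models, here is a back-of-the-envelope sketch in Python. It assumes the weights dominate memory use and that each parameter occupies `bits_per_weight / 8` bytes. The function name and the 4-bit quantization figure are illustrative assumptions, and real usage will be higher once the context cache and runtime overhead are added.

```python
def estimate_weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Rough size of the model weights alone, in gigabytes (10^9 bytes).

    Assumption: every parameter is stored at the same bit width, and
    nothing else (context cache, activations, runtime) is counted.
    """
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Compare the model sizes mentioned in this article at an assumed 4-bit quantization.
for size in (1, 3, 9, 20):
    print(f"{size}B at 4-bit: about {estimate_weight_memory_gb(size, 4):.1f} GB of weights")
```

The same function with `bits_per_weight=16` shows why unquantized models outgrow consumer GPUs so quickly.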

Heck, even with the same parameter count, two LLMs can differ in the kind of data they can process. Multimodal LLMs can combine text, videos, images, or even something as quirky as sensor feeds, while simpler models can fail to comprehend anything beyond simple documents. The local AI ecosystem even has some ultra-efficient embedding models whose sole purpose is to map ordinary documents into vector spaces, thereby making it easier to retrieve information.
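
The vector-space idea is easier to see in a toy sketch. The Python below is not a real embedding model: `toy_embed` is a hypothetical stand-in that simply counts words, and the sample notes are made up. It only illustrates the retrieval step, where the query and every document become vectors and the closest one by cosine similarity wins.

```python
import math
from collections import Counter


def toy_embed(text: str) -> Counter:
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str]) -> str:
    """Return the document whose vector is closest to the query vector."""
    query_vec = toy_embed(query)
    return max(documents, key=lambda doc: cosine_similarity(query_vec, toy_embed(doc)))


# Made-up notes, purely for illustration.
notes = [
    "home assistant automation for the living room lights",
    "nextcloud backup schedule for the nas server",
    "recipe for sourdough bread",
]
print(retrieve("nas backup", notes))
```

A real embedding model replaces `toy_embed` with dense vectors that also capture meaning, so a query can match a document that shares no exact words with it.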

Switching between LLMs lets you harness their unique capabilities

And you can optimize your LLM workloads to match your GPU specs

If I were to stick with a specific model family, I’d be significantly bottlenecking the potential of my local LLM servers. So, I tend to use several LLMs in my workflow, and cycle between them depending on my needs.

Take my Paperless-GPT text extraction pipeline, for example. Since minicpm-v is a vision-capable model, it’s extremely accurate at recognizing text in images and PDF documents. Attempting to use Llama 3.1 specifically for text extraction would be futile, even though it’s much better for general-purpose conversational tasks.

Likewise, I rely on my Qwen 3.5 (9B) model when instructing my MCP-powered tools, as I don’t want them to hallucinate while I’m trying to configure a quick automation workflow. For my coding workloads (and no, I don’t use clankers to generate apps), I tend to go with even higher-parameter models. However, the 20B models are too large to run on anything but my RTX 3080 Ti (and even then, they require extra tweaks). So, I rely on the simple 3B LLMs and embedding models hosted on my GTX 1080 for simple bookmark, document, and note tagging instead.
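
One way to picture this kind of task-based switching is a small routing table. The Python sketch below is hypothetical rather than the actual setup: the task labels, the model labels for the 20B and 3B entries, and every `min_vram_gb` value are assumptions for illustration, while the GPU capacities are the stock 12 GB and 8 GB of the two cards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    name: str
    min_vram_gb: float  # assumed footprint, not a measured value


# Stock VRAM capacities of the two cards, in GB.
GPUS = {"RTX 3080 Ti": 12, "GTX 1080": 8}

# Hypothetical routing table: task label -> preferred model.
ROUTES = {
    "text_extraction": ModelChoice("minicpm-v", 6),
    "tool_calling": ModelChoice("Qwen 3.5 (9B)", 7),
    "coding": ModelChoice("20B coding model", 12),
    "tagging": ModelChoice("3B model", 3),
}


def pick(task: str) -> tuple[str, str]:
    """Return (model, gpu), choosing the smallest GPU the model fits on."""
    model = ROUTES[task]
    fitting = [(vram, gpu) for gpu, vram in GPUS.items() if vram >= model.min_vram_gb]
    if not fitting:
        raise RuntimeError(f"No GPU has enough VRAM for {model.name}")
    _, gpu = min(fitting)
    return model.name, gpu


for task in ROUTES:
    model_name, gpu_name = pick(task)
    print(f"{task}: {model_name} on {gpu_name}")
```

Picking the smallest card that fits keeps the larger GPU free for the heavyweight models, which is the main design choice in this sketch.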

Really, the secret to a productive local LLM setup isn’t staying loyal to a specific family; it’s swapping between several models depending on the task and the capabilities of the underlying hardware.