Data powers how systems learn, how products evolve, and how companies make decisions. But getting answers quickly, correctly, and with the right context is often harder than it should be. To make this easier as OpenAI scales, we built our own bespoke in-house AI data agent that explores and reasons over our own platform.

Our data agent lets employees go from question to insight in minutes, not days. This lowers the bar for pulling data and doing nuanced analysis across all functions, not just within our data team. Today, teams across Engineering, Data Science, Go-To-Market, Finance, and Research at OpenAI lean on the agent to answer high-impact data questions. For example, it can help answer how to evaluate launches and understand business health, all through the intuitive format of natural language. The agent combines Codex-powered table-level knowledge with product and organizational context. Its continuously learning memory system means it also improves with every turn.

In this post, we’ll break down why we needed a bespoke AI data agent, what makes its code-enriched data context and self-learning so useful, and the lessons we learned along the way.

OpenAI’s data platform serves more than 3.5k internal users working across Engineering, Product, and Research, spanning over 600 petabytes of data across 70k datasets. At that size, simply finding the right table can be one of the most time-consuming parts of doing analysis.

As one internal user put it:

“We have a lot of tables that are fairly similar, and I spend tons of time trying to figure out how they’re different and which to use. Some include logged-out users, some don’t. Some have overlapping fields; it’s hard to tell what’s what.”

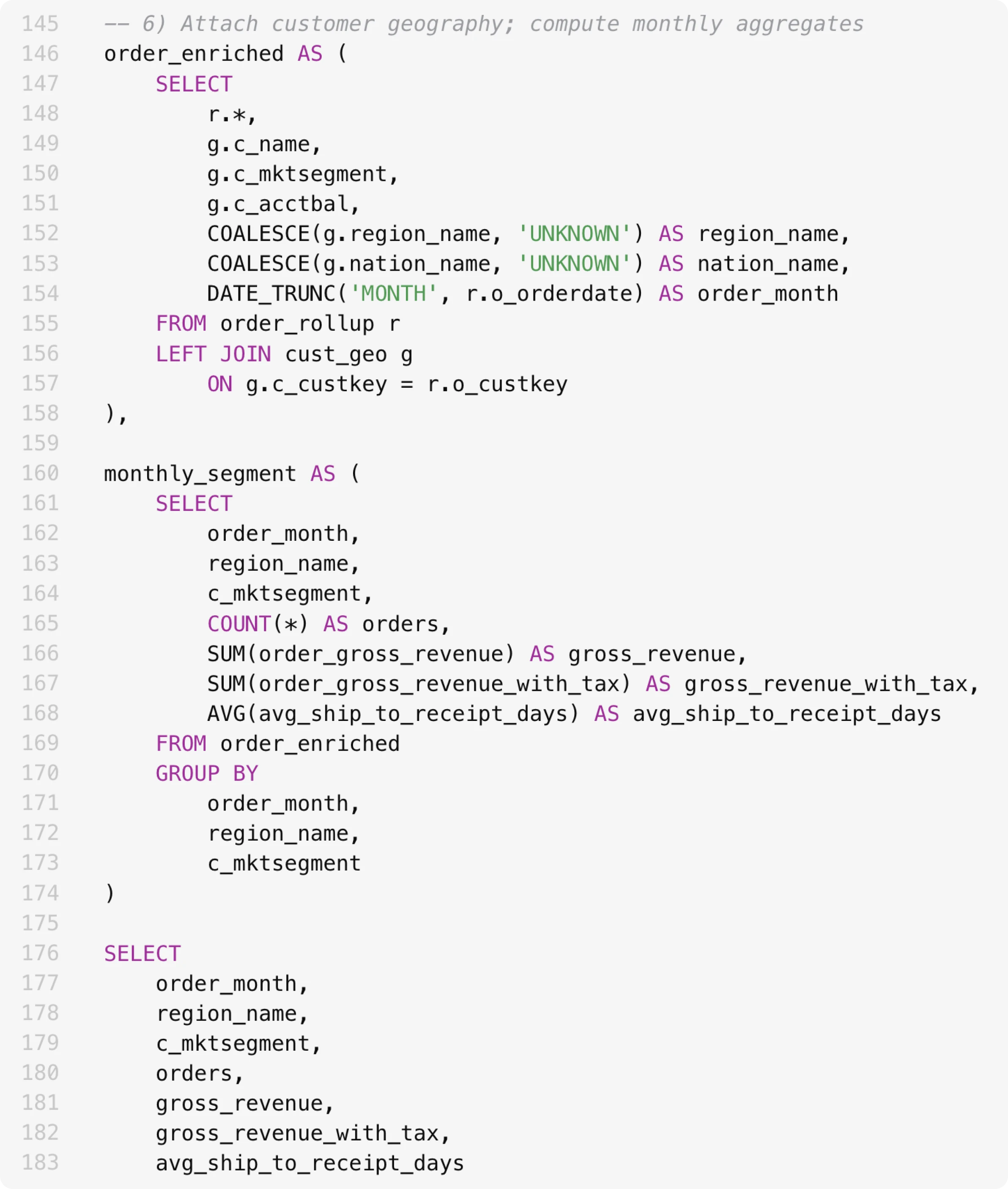

Even with the correct tables selected, producing correct results can be challenging. Analysts must reason about table data and table relationships to ensure transformations and filters are applied correctly. Common failure modes (many-to-many joins, filter pushdown errors, and unhandled nulls) can silently invalidate results. At OpenAI’s scale, analysts should not have to sink time into debugging SQL semantics or query performance: their focus should be on defining metrics, validating assumptions, and making data-driven decisions.
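To make two of these failure modes concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. The `orders` and `sessions` tables and their contents are invented for illustration and are not from OpenAI’s platform. It shows a many-to-many join inflating a sum and a `!=` filter silently dropping null rows.

```python
import sqlite3

# Hypothetical toy tables, invented purely for illustration.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (user_id INTEGER, amount INTEGER);
    CREATE TABLE sessions (user_id INTEGER, device TEXT);
    INSERT INTO orders VALUES (1, 10), (1, 20), (2, 5);
    INSERT INTO sessions VALUES (1, 'web'), (1, 'ios'), (2, NULL);
""")


def scalar(sql):
    """Run a query that returns a single value."""
    return conn.execute(sql).fetchone()[0]


# Ground truth: total order amount, computed without any join.
true_total = scalar("SELECT SUM(amount) FROM orders")

# Many-to-many join: user 1 has 2 orders and 2 sessions, so each of that
# user's orders appears twice and the sum is silently inflated.
inflated_total = scalar(
    "SELECT SUM(o.amount) FROM orders o JOIN sessions s ON o.user_id = s.user_id"
)

# Unhandled NULL: `device != 'web'` evaluates to NULL for NULL devices,
# so those rows are dropped without any error.
non_web = scalar("SELECT COUNT(*) FROM sessions WHERE device != 'web'")
non_web_null_safe = scalar(
    "SELECT COUNT(*) FROM sessions WHERE device IS NULL OR device != 'web'"
)

print(true_total, inflated_total, non_web, non_web_null_safe)
```

Both wrong queries run without errors, which is why these mistakes are easy to miss in a long SQL statement.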

This SQL statement is 180+ lines long. It’s not easy to know if we’re joining the right tables and querying the right columns.

Let’s walk through what our agent is, how it curates context, and how it keeps improving itself.

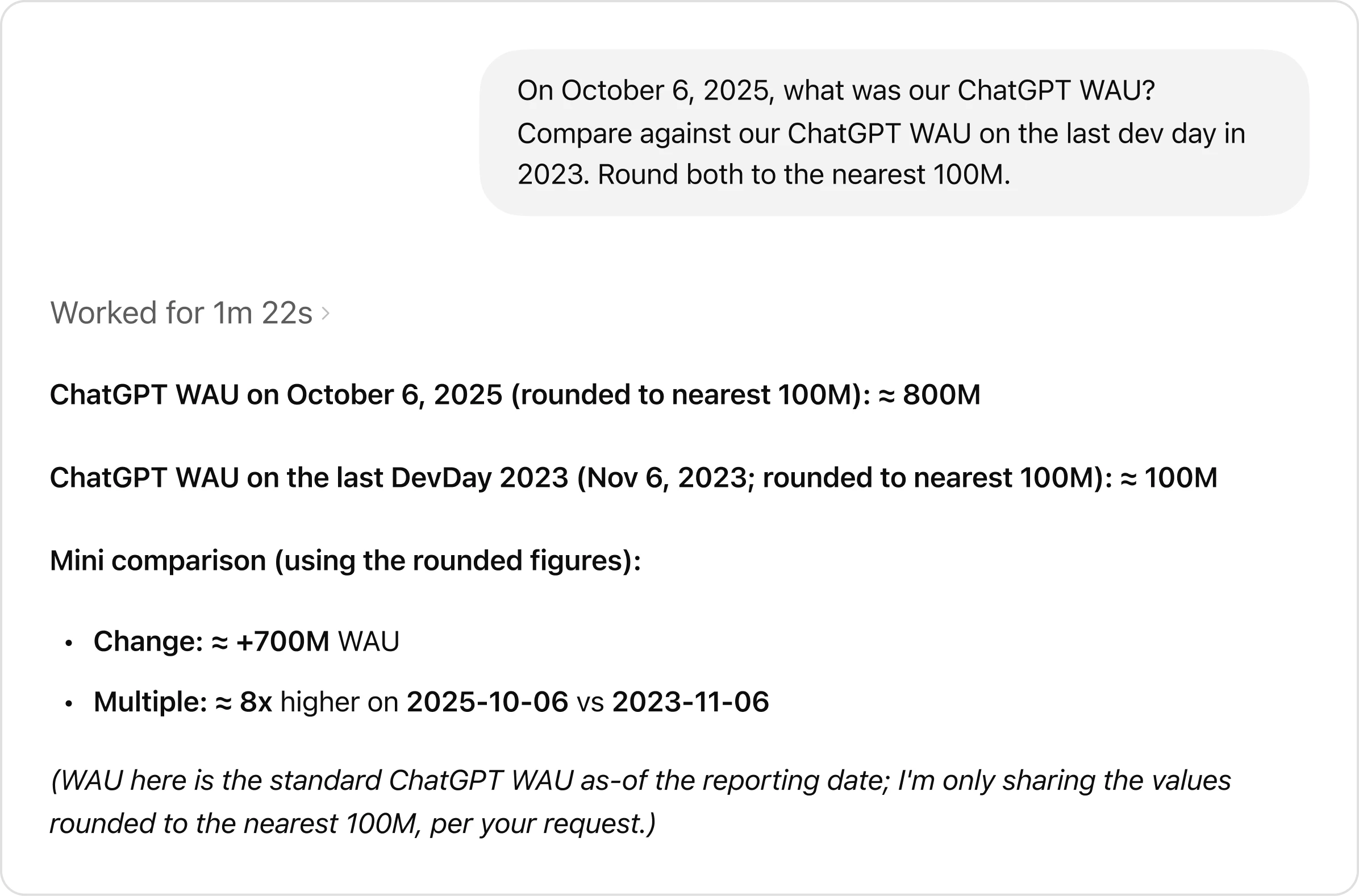

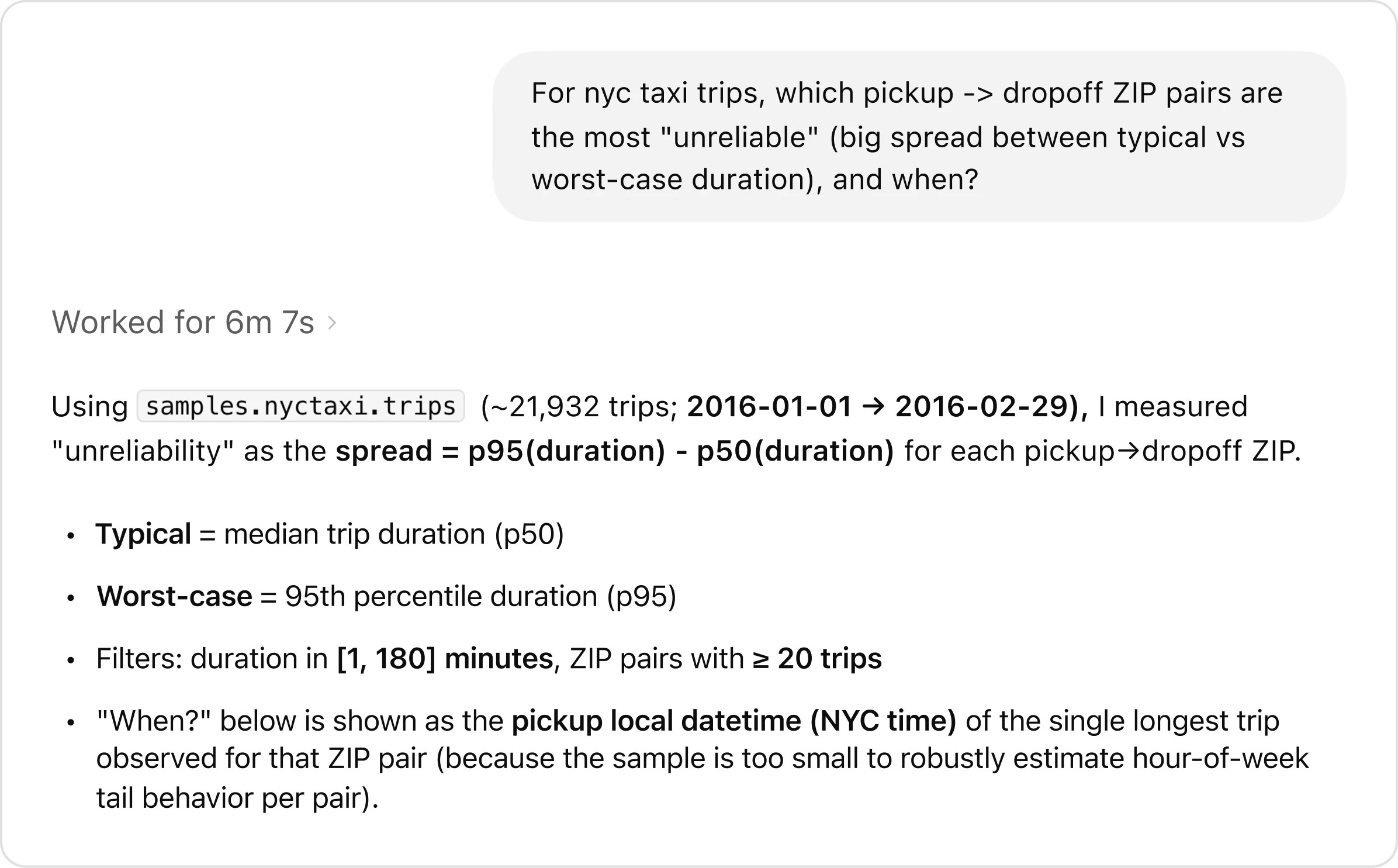

Users can ask complex, open-ended questions that would typically require multiple rounds of manual exploration. Take this example prompt, which uses a test data set: “For NYC taxi trips, which pickup-to-dropoff ZIP pairs are the most unreliable, with the largest gap between typical and worst-case travel times, and when does that variability occur?”

The agent handles the analysis end-to-end, from understanding the question to exploring the data, running queries, and synthesizing findings.

The agent’s response to the question.

One of the agent’s superpowers is how it reasons through problems. Rather than following a fixed script, the agent evaluates its own progress. If an intermediate result looks wrong (e.g., if it has zero rows due to an incorrect join or filter), the agent investigates what went wrong, adjusts its approach, and tries again. Throughout this process, it retains full context and carries learnings forward between steps. This closed-loop, self-learning process shifts iteration from the user into the agent itself, enabling faster results and consistently higher-quality analyses than manual workflows.
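The check-and-retry loop described above can be sketched roughly as follows. This is only an illustration under strong assumptions: the model’s revisions are replaced by a hard-coded list of candidate queries, and the `trips` table, its columns, and the `run_with_self_check` helper are all hypothetical.

```python
import sqlite3


def run_with_self_check(conn, candidate_queries):
    """Try each candidate query in order and stop at the first non-empty result.

    Returns (sql, rows, notes). `notes` records why earlier attempts were
    rejected, standing in for the context an agent carries between steps.
    """
    notes = []
    for sql in candidate_queries:
        rows = conn.execute(sql).fetchall()
        if rows:
            return sql, rows, notes
        # A zero-row result is treated as a signal that a join or filter is wrong.
        notes.append(f"zero rows from: {sql}")
    return None, [], notes


# Hypothetical test data, loosely modeled on the taxi example.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE trips (pickup_zip TEXT, minutes REAL);
    INSERT INTO trips VALUES ('10001', 12.0), ('10001', 45.0), ('11201', 20.0);
""")

candidates = [
    # First attempt filters on a value format that does not exist in the data.
    "SELECT pickup_zip, MAX(minutes) FROM trips "
    "WHERE pickup_zip = 'NYC-10001' GROUP BY pickup_zip",
    # Revised attempt uses the format actually stored in the table.
    "SELECT pickup_zip, MAX(minutes) FROM trips "
    "WHERE pickup_zip = '10001' GROUP BY pickup_zip",
]

sql, rows, notes = run_with_self_check(conn, candidates)
print(rows, notes)
```

In a real agent the next candidate would come from the model reasoning over `notes` and the schema, not from a fixed list.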

The agent’s reasoning to identify the most unreliable NYC taxi pickup–dropoff pairs.

The agent covers the full analytics workflow: discovering data, running SQL, and publishing notebooks and reports. It understands internal company knowledge, can search the web for external information, and improves over time through learned usage and memory.

High-quality answers depend on rich, accurate context. Without context, even strong models can produce wrong results, such as vastly misestimating user counts or misinterpreting internal terminology.

The agent without memory, unable to query effectively.

The agent’s memory enables faster queries by locating the correct tables.

To avoid these failure modes, the agent is built around multiple layers of context that ground it in OpenAI’s data and institutional knowledge.

- Metadata grounding: The agent relies on schema metadata (column names and data types) to inform SQL writing and uses table lineage (e.g., upstream and downstream table relationships) to provide context on how different tables relate.

- Query inference: Ingesting historical queries helps the agent understand how to write its own queries and which tables are typically joined together.

- Curated descriptions of tables and columns provided by domain experts, capturing intent, semantics, business meaning, and known caveats that are not easily inferred from schemas or past queries.

Metadata alone isn’t enough. To really tell tables apart, you need to understand how they were created and where they originate.

- By deriving a code-level definition of a table, the agent builds a deeper understanding of what the data actually contains.

- Nuances about what’s stored in the table and how it’s derived from an analytics event provide extra information. For example, they can give context on the uniqueness of values, how often the table data is updated, and the scope of the data (e.g., which fields the table excludes and what level of granularity it has).

- This provides enhanced usage context by showing how the table is used beyond SQL in Spark, Python, and other data systems.

- This means the agent can distinguish between tables that look similar but differ in critical ways. For example, it can tell whether a table only includes first-party ChatGPT traffic. This context is also refreshed automatically, so it stays up to date without manual maintenance.

- The agent can access Slack, Google Docs, and Notion, which capture critical company context such as launches, reliability incidents, internal codenames and tools, and the canonical definitions and computation logic for key metrics.

- These documents are ingested, embedded, and stored with metadata and permissions. A retrieval service handles access control and caching at runtime, enabling the agent to pull in this information efficiently and safely.

- When the agent is given corrections or discovers nuances about certain data questions, it can save these learnings for next time, allowing it to constantly improve with its users.

- As a result, future answers begin from a more accurate baseline rather than repeatedly encountering the same issues.

- The goal of memory is to retain and reuse non-obvious corrections, filters, and constraints that are critical for data correctness but difficult to infer from the other layers alone.

- For example, in one case, the agent didn’t know how to filter for a particular analytics experiment (it relied on matching against a specific string defined in an experiment gate). Memory was crucial here to ensure it filtered correctly, instead of attempting a fuzzy string match.

- When you give the agent a correction or when it finds a learning from your conversation, it will prompt you to save that memory for next time.

- Memories can also be manually created and edited by users.

- Memories are scoped at the global and personal level, and the agent’s tooling makes it easy to edit them.

- When no prior context exists for a table or when existing information is stale, the agent can issue live queries to the data warehouse to inspect and query the table directly. This allows it to validate schemas, understand the data in real time, and respond accordingly.

- The agent is also able to talk to other Data Platform systems (metadata service, Airflow, Spark) as needed to get broader data context that exists outside the warehouse.
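As a rough illustration of the memory layer described in the bullets above, the sketch below stores corrections at two scopes, global and personal, and merges them on recall. The `MemoryStore` class, its method names, and the example table, users, and notes are all hypothetical; the post does not describe how memory is actually stored.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical two-scope memory: global notes plus per-user notes."""

    global_notes: dict = field(default_factory=dict)    # table -> [notes]
    personal_notes: dict = field(default_factory=dict)  # (user, table) -> [notes]

    def save(self, table, note, user=None):
        """Save a note globally, or for one user when `user` is given."""
        if user is None:
            self.global_notes.setdefault(table, []).append(note)
        else:
            self.personal_notes.setdefault((user, table), []).append(note)

    def recall(self, table, user):
        """Return global notes first, then the user's personal notes."""
        return self.global_notes.get(table, []) + self.personal_notes.get(
            (user, table), []
        )


store = MemoryStore()
# A non-obvious correction of the kind that is hard to infer from schemas alone.
store.save("experiment_events", "filter on the exact gate string, not a fuzzy match")
store.save("experiment_events", "default to the EU region", user="alice")

print(store.recall("experiment_events", user="alice"))
print(store.recall("experiment_events", user="bob"))
```

Keeping personal notes separate means one user’s preferences never change the answers another user receives, while shared corrections benefit everyone.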

We run a daily offline pipeline that aggregates table usage, human annotations, and Codex-derived enrichment into a single, normalized representation. This enriched context is then converted into embeddings using the OpenAI embeddings API and stored for retrieval. At query time, the agent pulls only the most relevant embedded context via retrieval-augmented generation (RAG) instead of scanning raw metadata or logs. This makes table understanding fast and scalable, even across tens of thousands of tables, while keeping runtime latency predictable and low. Runtime queries are issued live to our data warehouse as needed.
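The retrieval step can be sketched as a nearest-neighbor lookup over precomputed vectors. To keep the example self-contained, the `embed` function below is a toy bag-of-words stand-in, not the OpenAI embeddings API, and the table names and descriptions are invented. Only the overall shape (embed offline, rank by cosine similarity at query time) reflects the description above.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase bag-of-words counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, index, k=1):
    """Return the names of the k entries most similar to the question."""
    query_vec = embed(question)
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [name for name, _ in ranked[:k]]


# Offline step: embed enriched table descriptions once (hypothetical tables).
docs = {
    "chat_events_first_party": "chatgpt traffic events first party logged in users",
    "billing_invoices": "finance invoices revenue billing monthly",
}
index = [(name, embed(text)) for name, text in docs.items()]

# Query-time step: pull only the most relevant context for the question.
top = retrieve("monthly revenue from invoices", index)
print(top)
```

A production system would swap `embed` for a real embedding model and the sorted list for a vector index, but the query-time contract stays the same: a question goes in, and a small set of relevant context comes out.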

Together, these layers ensure that the agent’s reasoning is grounded in OpenAI’s data, code, and institutional knowledge, dramatically reducing errors and improving answer quality.

One-shot answers work when the problem is clear, but most questions aren’t. More often, arriving at the correct result requires back-and-forth refinement and some course correction.

The agent is built to behave like a teammate you can reason with. It’s conversational and always-on, and it handles both quick answers and iterative exploration.

It carries over complete context across turns, so users can ask follow-up questions, adjust their intent, or change direction without restating everything. If the agent starts heading down the wrong path, users can interrupt mid-analysis and redirect it, just like working with a human collaborator who listens instead of plowing ahead.

When instructions are unclear or incomplete, the agent proactively asks clarifying questions. If no response is provided, it applies sensible defaults to make progress. For example, if a user asks about business growth with no date range specified, it will assume the last seven or 30 days. These priors allow it to stay responsive and non-blocking while still converging on the right result.
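A default like this might be applied along the following lines. This is a minimal sketch: the `resolve_date_range` helper and its parameters are hypothetical, and the seven-day window is just one of the two defaults mentioned above. The returned flag lets the caller tell the user that an assumption was made.

```python
from datetime import date, timedelta


def resolve_date_range(start=None, end=None, today=None, default_days=7):
    """Fill in a missing date range and report whether a default was assumed.

    Returns (start, end, assumed), where `assumed` is True if either bound
    was missing and had to be filled in.
    """
    assumed = start is None or end is None
    if today is None:
        today = date.today()
    if end is None:
        end = today
    if start is None:
        start = end - timedelta(days=default_days)
    return start, end, assumed


# No range given: fall back to the last seven days and flag the assumption.
start, end, assumed = resolve_date_range(today=date(2025, 1, 31))
print(start, end, assumed)

# Explicit range: used as-is, with nothing assumed.
explicit = resolve_date_range(start=date(2025, 1, 1), end=date(2025, 1, 15))
print(explicit)
```

Returning the `assumed` flag instead of applying the default silently fits the transparency goal: the answer can state which window was used.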

The result is an agent that works well when you know exactly what you want (e.g., “Tell me about this table”) and is just as strong when you’re exploring (e.g., “I’m seeing a dip here, can we break this down by customer type and timeframe?”).

After rollout, we observed that users frequently ran the same analyses for routine, repetitive work. To expedite this, the agent’s workflows package recurring analyses into reusable instruction sets. Examples include workflows for weekly business reports and table validations. By encoding context and best practices once, workflows streamline repeat analyses and ensure consistent results across users.

Building an always-on, evolving agent means quality can drift just as easily as it can improve. Without a tight feedback loop, regressions are inevitable and invisible. The only way to scale capability without breaking trust is through systematic evaluation.

The agent’s evals are built on curated sets of question-answer pairs. Each question targets an important metric or analytical pattern we care deeply about getting right, paired with a manually authored “golden” SQL query that produces the expected result. For each eval, we send the natural language question to the agent’s query-generation endpoint, execute the generated SQL, and compare the output against the result of the expected SQL.

Evaluation doesn’t rely on naive string matching. Generated SQL can differ syntactically while still being correct, and result sets may include extra columns that don’t materially affect the answer. To account for this, we compare both the SQL and the resulting data, and feed these signals into OpenAI’s Evals grader. The grader produces a final score along with an explanation, capturing both correctness and acceptable variation.
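One way the data-comparison signal might be computed is sketched below. This is an assumption for illustration, not the grader itself: the `results_match` helper is hypothetical, it covers only the result-set comparison (not the SQL comparison or the model-based grading), and it treats extra columns and row order as acceptable variation.

```python
def results_match(expected, actual):
    """Compare two result sets given as lists of dict rows.

    Returns True when `actual` contains every expected column and the same
    multiset of rows over those columns. Extra columns in `actual` and
    differences in row order are ignored.
    """
    if not expected:
        return not actual
    columns = sorted(expected[0])
    if any(col not in row for row in actual for col in columns):
        return False

    def project(rows):
        # Keep only the expected columns; sort by repr so mixed types
        # (including None) never raise a comparison error.
        return sorted((tuple(row[col] for col in columns) for row in rows), key=repr)

    return project(expected) == project(actual)


golden = [{"zip": "10001", "trips": 2}, {"zip": "11201", "trips": 1}]

# Same answer with a different row order and an extra column: acceptable.
generated_ok = [
    {"zip": "11201", "trips": 1, "borough": "Brooklyn"},
    {"zip": "10001", "trips": 2, "borough": "Manhattan"},
]
# A different count for one ZIP code: a real discrepancy.
generated_bad = [{"zip": "10001", "trips": 3}, {"zip": "11201", "trips": 1}]

print(results_match(golden, generated_ok), results_match(golden, generated_bad))
```

A boolean like this would be one input among several; per the description above, the final score and explanation come from the grader.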

These evals are like unit tests that run continuously during development to identify regressions, and they serve as canaries in production. This allows us to catch issues early and iterate confidently as the agent’s capabilities expand.

Our agent plugs directly into OpenAI’s existing security and access-control model. It operates purely as an interface layer, inheriting and enforcing the same permissions and guardrails that govern OpenAI’s data.

All of the agent’s access is strictly pass-through, meaning users can only query tables they already have permission to access. When access is missing, it flags this or falls back to alternative datasets the user is authorized to use.
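The pass-through behavior can be illustrated with a small check that runs before any query is issued. Everything here is hypothetical: the `authorize` function, the permission set, and the table names are invented, and a real system would defer to the existing access-control service instead of an in-memory set.

```python
def authorize(user_tables, requested, alternatives):
    """Split requested tables into allowed and denied for one user.

    `user_tables` is the set of tables the user can already read.
    `alternatives` maps a table to possible substitutes. Only substitutes
    the user is also permitted to read are offered as fallbacks.
    """
    allowed = [t for t in requested if t in user_tables]
    denied = [t for t in requested if t not in user_tables]
    fallbacks = {
        t: [alt for alt in alternatives.get(t, []) if alt in user_tables]
        for t in denied
    }
    return allowed, denied, fallbacks


# Hypothetical permissions and table names.
user_tables = {"usage_daily", "usage_daily_aggregated"}
alternatives = {"usage_raw_events": ["usage_daily_aggregated", "usage_raw_sampled"]}

allowed, denied, fallbacks = authorize(
    user_tables, ["usage_daily", "usage_raw_events"], alternatives
)
print(allowed, denied, fallbacks)
```

The agent never gains access of its own in this model: a fallback is only suggested when the user could already have queried it directly.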

Finally, it’s built for transparency. Like any system, it can make mistakes. It exposes its reasoning process by summarizing assumptions and execution steps alongside each answer. When queries are executed, it links directly to the underlying results, allowing users to inspect raw data and verify every step of the analysis.

Building our agent from scratch surfaced practical lessons about how agents behave, where they struggle, and what actually makes them reliable at scale.

Early on, we exposed our full tool set to the agent, and quickly ran into problems with overlapping functionality. While this redundancy can be helpful for specific custom cases and is more obvious to a human invoking tools manually, it’s confusing to agents. To reduce ambiguity and improve reliability, we restricted and consolidated certain tool calls.

We also discovered that highly prescriptive prompting degraded results. While many questions share a general analytical shape, the details vary enough that rigid instructions often pushed the agent down wrong paths. By shifting to higher-level guidance and relying on GPT‑5’s reasoning to choose the appropriate execution path, the agent became more robust and produced better results.

Schemas and query history describe a table’s shape and usage, but its true meaning lives in the code that produces it. Pipeline logic captures assumptions, freshness guarantees, and business intent that never surface in SQL or metadata. By crawling the codebase with Codex, our agent understands how datasets are actually constructed and can reason better about what each table contains. It can answer “what’s in here” and “when can I use it” far more accurately than from warehouse signals alone.

We’re constantly working to improve our agent by increasing its ability to handle ambiguous questions, improving its reliability and accuracy with stronger validations, and integrating it more deeply into workflows. We believe it should blend naturally into how people already work, instead of functioning like a separate tool.

While our tooling will keep benefiting from underlying improvements in agent reasoning, validation, and self-correction, our team’s mission remains the same: seamlessly deliver fast, trustworthy data analysis across OpenAI’s data ecosystem.