If you’ve spent any time in the local LLM space, you’re almost certainly familiar with the hardware ceiling. The most interesting open-source models keep getting bigger, and the gap between what’s published on Hugging Face and what you can actually load into VRAM at home has mostly been growing, aside from the handful of releases a year that run on anything and are genuinely impressive. Sure, you can download a 230B mixture-of-experts model for free, but it isn’t free to run. You need a workstation that costs as much as a car, and even then, you’re often quantizing the thing into oblivion just to fit it.

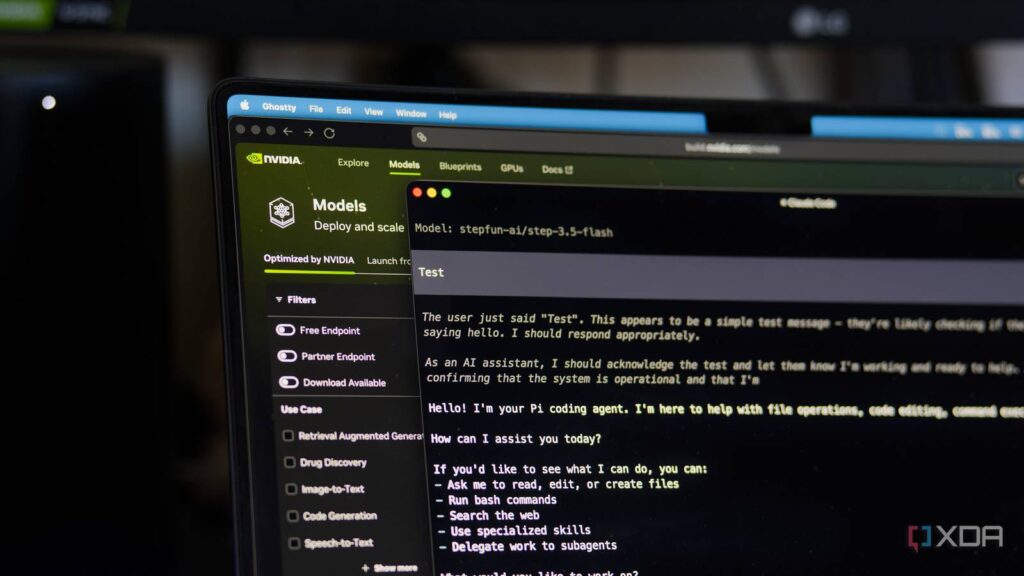

That’s why I’ve been testing Nvidia’s hosted endpoints on the Build Nvidia website. It isn’t a new platform, but the lineup of models has quietly grown into something I think a lot of people have overlooked. You sign up for the developer program, generate an API key, and you get free access to dozens of the largest open-source models out there, served from Nvidia’s own DGX Cloud hardware. Not every model in the catalog is on the free tier, and a few are flagged for upcoming deprecation, but the selection of free models is wide enough to cover most of what you’d actually want to try. You don’t need to put up a credit card, there’s no per-token billing, and more importantly, there’s no GPU cost that you have to pay yourself.

There are probably rate limits somewhere, and you have to trust Nvidia with the request, but it works. I’ve been using it through my own coding harness for weeks now to test models most people can’t ever run at home, and it’s surprisingly good.

The lineup is far bigger than people give it credit for

Most of the models I actually want to try are already there

The catalog runs to over 100 models at this point, with 50 of them (at the time of writing) carrying the “Free Endpoint” tag, but the count doesn’t matter as much as the curation does. For example, MiniMax M2.7 is there, which I’ve actually tested previously with a MiniMax subscription, given that it’s one of Claude’s most credible open-weight competitors. So is Step-3.5 Flash, the Stepfun model that I loved on the Lenovo ThinkStation PGX. Nvidia’s own Nemotron family is there too, a set of reasoning and agent-focused open models that Nvidia has been refining as a showcase for what its own training stack can do.

As for some of the other models, GLM-4.7 arrived recently, though it’s nowhere near as powerful for coding as GLM-5.1. Kimi K2 Thinking, Qwen3-Coder-480B, DeepSeek V3.2, Llama 4 Maverick, Mistral Large 3, Devstral 2, ByteDance’s Seed-OSS, and Google’s Gemma 3 family are all in there too, so there’s deep variety in terms of what you can try. A handful of the older Mistral and DeepSeek entries carry deprecation notices, so the lineup isn’t static, but new free endpoints have been landing faster than old ones leave.

The best part about this is that all of these models are huge. MiniMax M2.7 is a 230 billion parameter sparse MoE with 10B active per token, and Step-3.5 Flash is a 196B model with 11B active and a 256K context window. These are genuinely usable models that can power real work, but they’re also the kind of models that need a serious server to host. There are some limitations compared to running them locally, the most notable being that these are inference-only endpoints, so you can’t fine-tune or modify a model. What Nvidia hosts is what you get, but for the kind of testing and evaluation work people might want to do, it’s more than good enough.

These models are still open-weight models, and you can still pull them from Hugging Face, run them on your own hardware if you have it, and fine-tune them under whatever license they ship with. Nvidia’s hosting is just a convenience layer on top that lets you “try before you buy” in a sense. Contrast that with a new GPT release: OpenAI hosts the model, and that’s the only place the model exists. When Nvidia hosts MiniMax M2.7, the weights are published, the architecture is documented, and the only thing you’re really paying for, if you eventually decide to self-host it, is the GPU power to run it. There’s no version of this particular deal where the free tier locks you in.

Setup is basically an OpenAI-compatible endpoint

If your tool speaks OpenAI, it speaks Nvidia

The whole thing is built around an OpenAI-compatible API, which makes it trivially easy to drop into existing tooling. You sign up at build.nvidia.com with the free Nvidia Developer account, you generate an API key prefixed with “nvapi-”, and that’s about it. The base URL is “https://integrate.api.nvidia.com/v1” and the rest of the calls look exactly like what you’d send to any OpenAI-compatible service.

In practice, that means configuring something like this in your coding harness:

```json
"nvidia-build": {
  "baseUrl": "https://integrate.api.nvidia.com/v1",
  "api": "openai-completions",
  "apiKey": "nvapi-XXXXXXXXXXX",
  "models": [
    {
      "id": "stepfun-ai/step-3.5-flash",
      "contextWindow": 200000
    },
    {
      "id": "minimaxai/minimax-m2.7",
      "contextWindow": 200000
    }
  ]
}
```

After that, if you want to change the model, you just change the id or model name to whatever’s on the catalog page. Tool calling has worked cleanly in everything I’ve thrown at it so far, including the agentic stuff like MiniMax M2.7 and Step-3.5 Flash.

I run these models through Pi, but any other coding harness capable of using OpenAI-style completions, such as OpenCode, will work as well. This is the same harness I’ve pointed at MiniMax’s own API, GLM-5.1, and some of my self-hosted models. Swapping in Nvidia’s endpoint is a base URL change and an API key, nothing more. If you’re already using something like Aider, Continue, or any other tool that lets you specify a custom OpenAI-compatible backend, the configuration takes about a minute.
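Outside of a harness, the same endpoint can be exercised with nothing but the standard library. The sketch below is a minimal illustration, not Nvidia’s official client: it assumes the usual OpenAI-style `/chat/completions` path under the base URL `https://integrate.api.nvidia.com/v1`, and the helper names `build_chat_request` and `send` are hypothetical. The model id is the one from the harness config.

```python
import json
import urllib.request

# Base URL for Nvidia's hosted endpoints; the /chat/completions path is
# assumed from the OpenAI-compatible convention.
BASE_URL = "https://integrate.api.nvidia.com/v1"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # key looks like "nvapi-..."
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request(key, "minimaxai/minimax-m2.7", "Say hello."))` with a real key should return the model’s reply; switching models is only a change to the second argument.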

There is, in theory, a rate limit you have to live with on the free tier, but I haven’t run into one yet across weeks of regular use, and Nvidia doesn’t publish one. For interactive coding work or one-off evaluation runs, whatever ceiling exists hasn’t been something I’ve come across. You’re not going to use this to serve a production app because it can be a bit slow (especially at peak times), and that’s fine. That’s not what it’s for.
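If you script against the endpoint rather than use it interactively, it is still worth guarding against an unpublished ceiling. The following is a rough sketch of exponential backoff, assuming the service signals rate limiting with HTTP 429 as most OpenAI-compatible APIs do; that status code, the retry count, and the helper names are assumptions, not documented Nvidia behavior.

```python
import time
import urllib.error
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base: float = 1.0, cap: float = 30.0) -> Iterator[float]:
    """Yield delays of base, 2*base, 4*base, ... seconds, each capped at `cap`."""
    for attempt in range(retries):
        yield min(cap, base * (2 ** attempt))


def call_with_retry(call: Callable[[], T], retries: int = 4,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `call`, retrying with exponential backoff when it raises HTTP 429."""
    for delay in backoff_delays(retries):
        try:
            return call()
        except urllib.error.HTTPError as err:
            if err.code != 429:  # assumption: 429 means "rate limited"
                raise
            sleep(delay)
    return call()  # final attempt; any error propagates to the caller
```

Wrapping a request function in `call_with_retry` keeps a batch evaluation run alive through a brief throttle without hammering the endpoint.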

Nvidia’s free tier used to work on a credit system, where you’d get an allotment when you signed up and could request more if you needed them, and that system has quietly evolved over the last year. The current state, as of this April, is roughly a forever-free plan with rate limits as the main constraint, rather than per-token credits. There’s still a credit pool tied to the developer program for some of the larger or more specialized models, but for the headline open-weight stuff, throughput is the only thing you really run up against. I get why Nvidia runs it this way, as they sell the GPUs these models run on, and this really serves as a showcase or a sales funnel. It just happens to also be useful to anyone who simply wants to try a significantly larger model than their hardware allows for.

There are other places that will rent you access to the same models, of course, and they may not have the same throughput problems. OpenRouter aggregates a lot of these same open-weight models behind a single billing account, Groq runs a smaller curated set on its own LPU silicon at speeds most GPU stacks struggle to match, and Together AI has been running an open-model API for years. What Nvidia has, that the others don’t quite, is the combination of an obvious hardware match for how these models are deployed in production, a free tier generous enough to be useful, and a curated catalog that tends to pick up new releases close to the day they drop. None of those alone makes it the obvious pick, but together, they make it extremely attractive.

Hosted endpoints don’t replace local, they extend what you can test

Running 196B at home is still a non-starter for most people

I run local LLMs daily, and I’ve been pretty open that for the kind of work I actually do, the smaller models are mostly fine. Qwen3-Coder-Next on my own hardware is still my go-to for anything where privacy matters or where I don’t want a network dependency in the loop. Local always wins when local is good enough.

But the problem is that local isn’t always good enough, and the gap shows up exactly at the model sizes Nvidia is hosting. There’s no realistic way for me to run MiniMax M2.7 on the ThinkStation PGX. The full model needs serious hardware, and using the 3-bit quantization to run it on that machine strips away enough of its intelligence that I’m not actually testing the same model anymore. If I want to know how M2.7 actually performs on a task I care about, I need it served at full precision. Nvidia’s endpoint is the easiest way to get that without paying MiniMax directly or setting up an H100 cluster.
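The arithmetic behind that trade-off is easy to sketch. Weight storage is roughly the parameter count times the bits per weight, divided by eight. The snippet below applies that to a 230B model; treating 16 bits as a stand-in for full precision is an assumption (published weights may ship in another format), and the estimate ignores KV cache, activations, and runtime overhead.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes), weights only."""
    return params_billions * bits_per_weight / 8


# Compare a 230B-parameter model at several bit widths.
for bits in (16, 8, 4, 3):
    print(f"230B at {bits}-bit: ~{weight_memory_gb(230, bits):.0f} GB")
```

Under these assumptions the weights alone come to about 460 GB at 16-bit versus roughly 86 GB at 3-bit, which is why aggressive quantization is the only way such a model fits on a single desktop-class machine at all.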

That’s the most practical way to use the free API, as it’s a way to evaluate the largest open-weight models honestly, against the same prompts and tasks I throw at my local stack, without having to pretend that the heavily quantized model is the real thing. I’ve used it to play around with Step-3.5 Flash without having to worry about an out-of-memory error, and I’ve played around with other models I wouldn’t normally be bothered to configure locally. I also only discovered Nvidia’s free platform after I’d started testing MiniMax; otherwise, I would have simply tested it on Nvidia’s API first before taking the plunge on the base monthly subscription.
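One way to keep that comparison honest is to generate identical request bodies for every backend, so the only variable is the model. The sketch below is illustrative only: the local server address and local model name are hypothetical placeholders, the `/chat/completions` path is assumed from the OpenAI-compatible convention, and it only builds the plan rather than sending anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    model: str


def chat_payload(model: str, prompt: str) -> dict:
    """OpenAI-style chat body; temperature 0 reduces run-to-run sampling variance."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,
    }


def evaluation_plan(backends: list[Backend], prompts: list[str]) -> list[tuple[str, str, dict]]:
    """Pair every prompt with every backend as (backend name, URL, request body)."""
    return [
        (b.name, f"{b.base_url}/chat/completions", chat_payload(b.model, p))
        for p in prompts
        for b in backends
    ]


BACKENDS = [
    # Hypothetical local OpenAI-compatible server and model name.
    Backend("local", "http://localhost:8080/v1", "qwen3-coder-next"),
    Backend("nvidia", "https://integrate.api.nvidia.com/v1", "minimaxai/minimax-m2.7"),
]
```

Each tuple from `evaluation_plan(BACKENDS, prompts)` can then be posted with whatever HTTP client you prefer, and the responses compared side by side per prompt.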

There’s one group of people this is especially useful to, and it’s the people pricing up an Nvidia DGX Spark, a high-VRAM workstation, or a Mac Studio for local AI work. If you’re about to spend a few thousand dollars on a box for the express purpose of running these models, doing it without first trying the actual models on Nvidia’s free endpoints is a mistake. You can spend a weekend running Step-3.5 Flash, MiniMax M2.7, and a few Nemotron variants through your own real workflows, see which ones actually fit how you work, and then size your hardware around that answer instead. That’s a buying decision you can’t undo cheaply, but testing it is free.

The one downside is that nothing here is private. You’re sending your prompts to Nvidia’s infrastructure, usage is logged, and models each have their own data policies. For evaluation and exploratory work that’s perfectly fine, but for anything that touches real data or anything you’d consider sensitive, it’s the wrong tool.

Free access to frontier-scale open weights is a bigger deal than it sounds

More than enough for what most people actually need

The last few years of local AI have largely been a story of clever compression without reducing output quality and of finding ways to run smaller and smaller versions of bigger and bigger models. However, the new architectures and capabilities that can genuinely catch you off guard are the ones that arrive at a scale that doesn’t fit on home hardware.

A free, OpenAI-compatible endpoint serving the full-precision weights of those open models, hosted by the company that makes the GPUs they run on, flies in the face of the closed-model status quo. You get to try the big stuff, while comparing it to what you can already run locally. And when you’re done with your testing, you get to make an informed call about which model is worth your hardware budget or worth paying for on OpenRouter before you spend anything at all.