Google says it's increasingly using its Gemini AI models to detect and block harmful ads on its advertising platforms, as scammers and threat actors continue to evolve their tactics to evade detection.

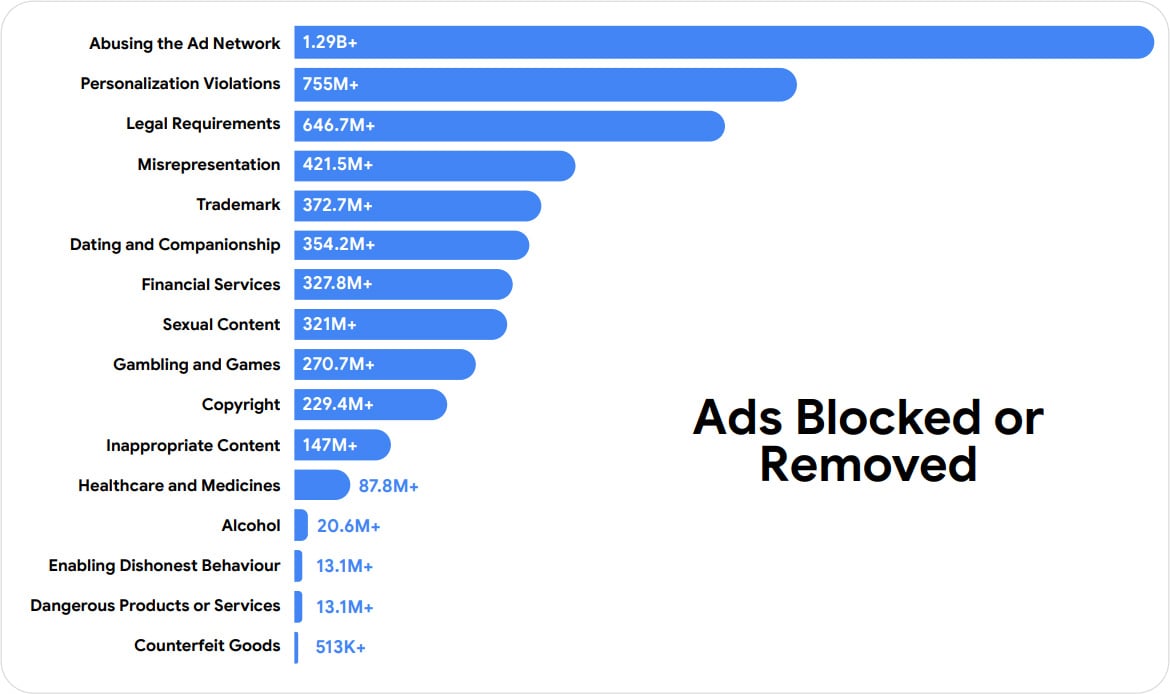

In a new post, the company reports having blocked or removed 8.3 billion ads and suspended 24.9 million advertiser accounts in 2025, including 602 million ads tied to scams.

Malvertising has been a long-standing problem on Google's ad network. Attackers buy ads that impersonate legitimate brands and products and use them to push malware, steal cryptocurrency, or lead users to phishing sites.

These ad campaigns often use cloaking techniques and URL redirects to appear as trusted websites. Some even display Google's own domains or those of legitimate software download pages and authentication portals.

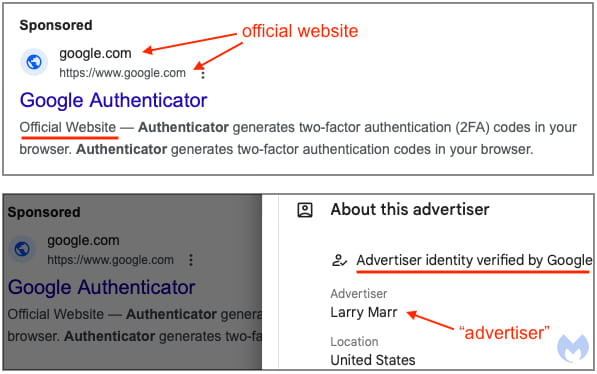

Recent campaigns reported by BleepingComputer include fake login pages that steal Google Ads accounts, ads impersonating tools like Google Authenticator and Homebrew that distribute trojanized software, and ads for websites posing as cryptocurrency platforms that drain visitors' cryptocurrency wallets.

Source: Malwarebytes

According to Google, cybercriminals are now using generative AI in these campaigns, which lets them quickly build more sophisticated, larger-scale operations.

"Bad actors are using generative AI to create deceptive ads at scale, and Gemini helps us detect and block them in real time. By the end of last year, the majority of Responsive Search Ads created in Google Ads were reviewed instantly, and harmful content was blocked at submission, a capability we plan to bring to more ad formats this year," explains Keerat Sharma, VP & General Manager, Ads Privacy and Safety.

To defend against this, Google says it now relies heavily on Gemini-powered systems to automatically discover and block malicious ads before they are shown to users.

While previous detection systems analyzed keywords for signs of malicious behavior, Google says Gemini can analyze billions of signals, including advertiser behavior, account history, campaign patterns, and intent, to determine whether an ad is malicious.

In the US, Google says it removed 1.7 billion ads and suspended 3.3 million advertiser accounts in 2025, with "abusing the ad network" and "misrepresentation" as the top two policy violations.

AI has also improved Google's response to malicious and scam ads that slip through the initial review process, allowing the company to process user reports much faster than in previous years.

Google also said the improved accuracy of its AI models has reduced incorrect advertiser suspensions by 80%.

The company says it will continue expanding Gemini's use across additional ad formats and enforcement systems, with the goal of blocking malicious campaigns at submission time.
