Free open-source GEO tracker for LLM visibility | OneGlanse

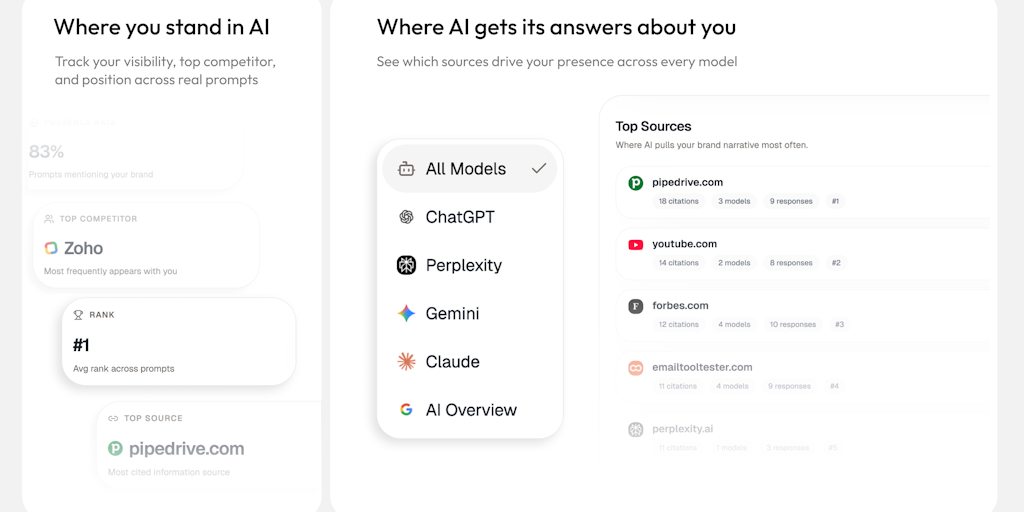

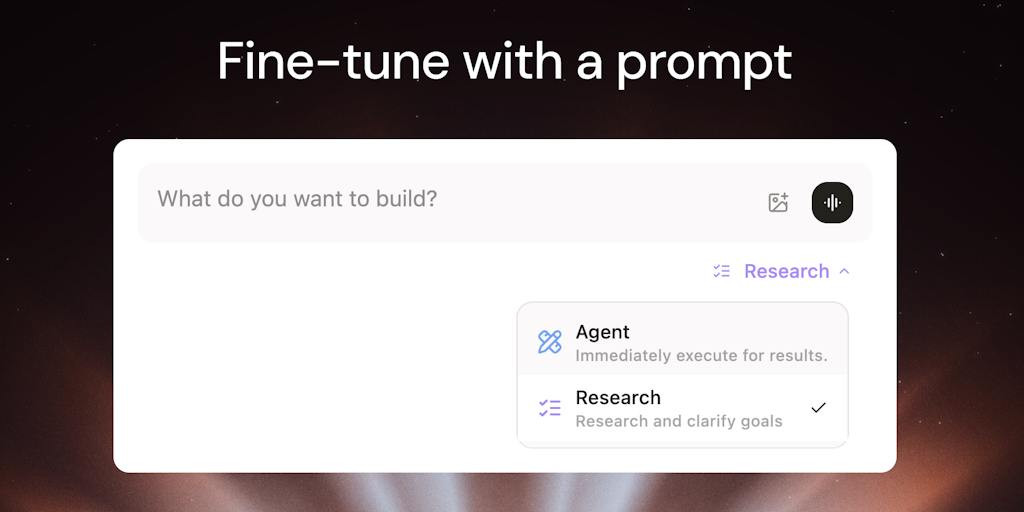

A completely free, open-source GEO (generative engine optimization) tracker that shows how your brand appears in real AI responses. Track visibility across ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews using real UI outputs, not APIs. Compare competitors, analyze sources, and understand your positioning. Run it locally or self-host it: your data stays with you. […]
