As AI models become increasingly commoditized, startups are racing to build the software layer that sits on top of them. One interesting entrant into this space is Osaurus, an open source, Apple-only LLM server that lets users switch between different AI models, whether hosted locally or in the cloud, while keeping their data and tools all on their own hardware.

Osaurus evolved out of the idea for a desktop AI companion, Dinoki, which Osaurus co-founder Terence Pae described as a sort of “AI-powered Clippy.” Dinoki’s customers had asked him why they should buy the app if they still had to pay for tokens, the usage units AI companies charge for processing prompts and generating responses.

That got Pae thinking more deeply about running AI locally.

“That’s how Osaurus started,” Pae, previously a software engineer at Tesla and Netflix, told TechCrunch over a call. The idea, he explained, was to try to run an AI assistant locally. “You can do pretty much everything on your Mac locally, like browsing your files, accessing your browser, accessing your system configurations. I figured this would be a great way to position Osaurus as a personal AI for individuals.”

Pae began building the tool in public as an open-source project, adding features and fixing bugs along the way.

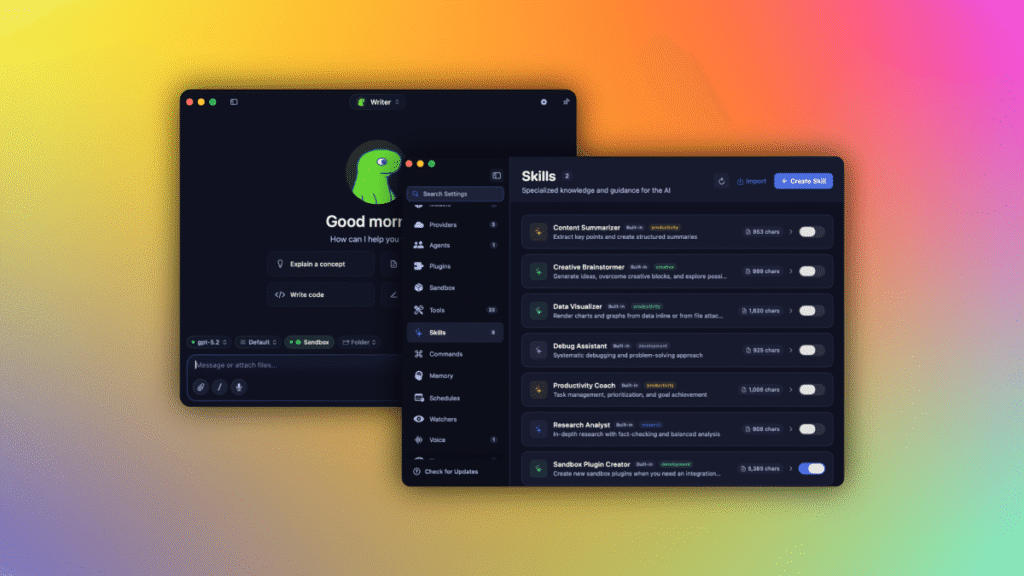

Today, Osaurus can flexibly connect to locally hosted AI models or cloud providers like OpenAI and Anthropic. Users can freely choose which AI models they’re using, and keep other aspects of the AI experience on their own hardware, like the models’ own memory, or their data and tools.

Given that different AI models have different strengths, the benefit of this system is that users can switch to the AI model that best fits their needs.

This kind of structure makes Osaurus what’s known as a “harness,” a control layer that connects different AI models, tools, and workflows through a single interface, similar to tools like OpenClaw or Hermes. The difference, however, is that such tools are often aimed at developers who know their way around a terminal. And sometimes, as in the case of OpenClaw, they can pose security issues and holes to worry about.

Osaurus, meanwhile, presents an easy-to-use interface and addresses security concerns by running things in a hardware-isolated, virtual sandbox. This limits the AI to a certain scope, keeping your computer and data safe.

Of course, the practice of running AI models on your device is still in its early days, given that it’s heavily resource-intensive and hardware-dependent. To run local models, your system will need at least 64 GB of RAM. For running larger models, like DeepSeek V4, Pae recommends systems with about 128 GB of RAM.

But Pae believes local AI’s requirements will come down in time.

“I can see the potential of it, because the intelligence per wattage, which is like the metric for local AI, has been going up significantly. It’s on its own curve of innovation. Last year, local AI could barely finish sentences, but today it can actually run tools, write code, access your browser, and order stuff from Amazon […] it’s just getting better and better,” he said.

Osaurus can currently run MiniMax M2.5, Gemma 4, Qwen3.6, GPT-OSS, Llama, DeepSeek V4, and other models. It also supports Apple’s on-device foundation models and Liquid AI’s LFM family of on-device models, and in the cloud, it can connect to OpenAI, Anthropic, Gemini, xAI/Grok, Venice AI, OpenRouter, Ollama, and LM Studio.

As a full MCP (Model Context Protocol) server, Osaurus can give any MCP-compatible client access to your tools as well. Plus, it ships with over 20 native plugins for Mail, Calendar, Vision, macOS Use, XLSX, PPTX, Browser, Music, Git, Filesystem, Search, Fetch, and more.

More recently, Osaurus was updated to include voice capabilities as well.

Since the project went live nearly a year ago, it’s been downloaded more than 112,000 times, according to its website.

Currently, Osaurus’ founders (who include co-founder Sam Yoo) are participating in the New York-based startup accelerator Alliance. They’re also thinking about next steps, which could see Osaurus being offered to businesses, like those in the legal space or in healthcare, where running local LLMs could address privacy concerns.

As the power of local AI models grows, the team believes it could lower the demand for AI data centers.

“We’re seeing this explosive growth in the AI space where [cloud AI providers] have to scale up using data centers and infrastructure, but we feel like people haven’t really seen the value of the local AI yet,” Pae said. “Instead of relying on the cloud, they can actually deploy a Mac Studio on-prem, and it should use significantly less power. You still have the capabilities of the cloud, but you will not be dependent on a data center in order to run that AI,” he added.
