We, at XDA, absolutely love local LLMs. That “we” didn’t really include me for the longest time, because I was perfectly happy letting cloud-based models do all the heavy lifting. Why fight with quantized weights and a fiddly setup when the results would always feel like a downgrade from what a cloud model hands you for free? So, after trying a local LLM and being disappointed, I let that first impression be my last for a long, long time.

However, seeing how much the other people at XDA rave about local LLMs made me feel like I was missing something. So, I finally gave one another shot. This time, instead of running a model on my MacBook Air with its embarrassing 8GB of RAM, I decided to run one directly on my phone. It was far more useful than I had any right to expect.

You don’t need beefy hardware to run Gemma 4 models

Unlike other local models

The reason local LLMs haven’t really appealed to me is mostly my own fault: I’ve been trying to run them on hardware they aren’t really designed for. With a cloud LLM, when you ask ChatGPT something, your prompt gets sent to a massive model sitting on powerful servers somewhere in a data center.

With a local LLM, the entire model has to fit on your device instead. This includes all of the model’s trained weights (essentially files containing everything the model has learned) and parameters, packed into a file small enough for your device’s memory to handle. Historically, the trade-off has always been quality and speed. However, AI companies have been working overtime to address exactly this, and Google’s Gemma 4 is one of the best examples of that effort paying off.
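To put rough numbers on that “has to fit in memory” constraint, here is a back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the 4-billion parameter count, the bit widths, and the `usable_fraction` share of RAM are hypothetical values, not Gemma 4’s published figures, and the helper names are my own. It only counts the weights themselves and ignores runtime overhead such as the context cache.

```python
def estimate_weights_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of a model's weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_memory(
    num_params: float,
    bits_per_weight: float,
    device_ram_gb: float,
    usable_fraction: float = 0.5,  # assumed share of RAM the OS will let one app use
) -> bool:
    """Return True if the weights fit within the assumed usable share of RAM."""
    budget_gb = device_ram_gb * usable_fraction
    return estimate_weights_gb(num_params, bits_per_weight) <= budget_gb


if __name__ == "__main__":
    hypothetical_params = 4e9  # illustrative only, not an official model size
    for bits in (16, 8, 4):
        size = estimate_weights_gb(hypothetical_params, bits)
        ok = fits_in_memory(hypothetical_params, bits, device_ram_gb=8)
        print(f"{bits:>2}-bit weights: ~{size:.1f} GB, fits in 8GB device: {ok}")
```

The takeaway is simply that storing each weight in fewer bits (quantization) shrinks the file roughly in proportion, which is why the same model can go from impossible to comfortable on a device with limited RAM.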

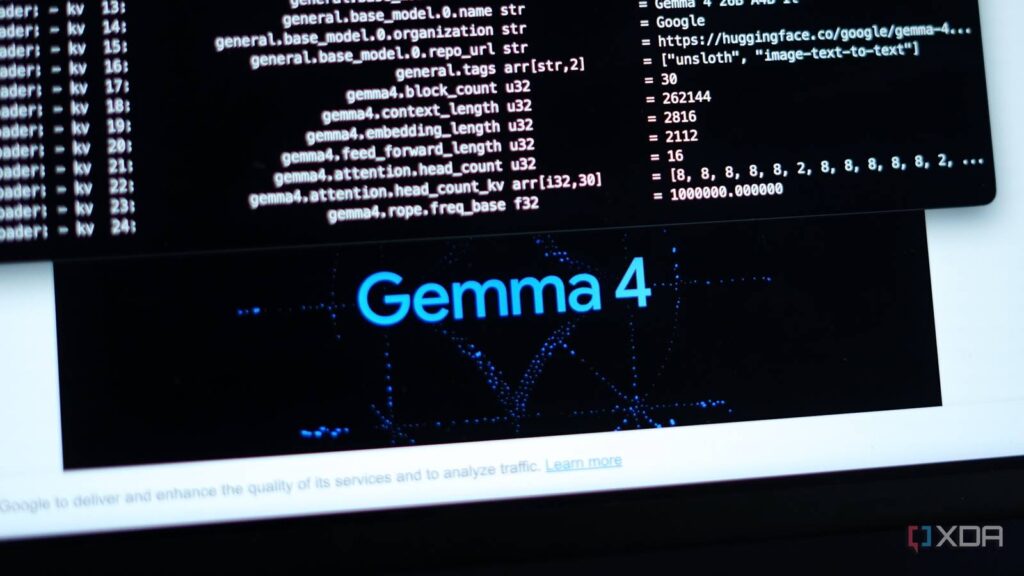

Gemma 4 is Google’s newest family of open-source AI models built on the same architecture as Gemini 3, and it consists of models in four different sizes: E2B and E4B for phones and edge devices, a 26B mixture-of-experts model, and a full 31B dense model. The best part about these models is that Google has intentionally engineered them to squeeze more intelligence out of each parameter. An LLM’s parameters are essentially the learned settings that control and shape its output and behavior.

Traditionally, more parameters have translated to better results. However, that also meant you’d need more hardware to run them. Gemma 4 flips this by getting smarter output from fewer parameters! In simpler terms, you’re getting responses that feel like they’re coming from a larger model without needing the hardware to run one.

You can run Gemma E2B and E4B on your phone for free

Download, install, and go

Two of the models from the Gemma 4 family, E2B and E4B, are optimized to run smoothly on devices like your phone or laptop. Since local models run on your own hardware, they’re completely free to use, and your data stays entirely on your device. So, if you have a relatively modern phone, you have no reason not to try it. You have a few options for running Gemma 4 on your phone.

First up, you can use Google’s AI Edge Gallery app, which is completely free to download and available on both iOS and Android. You can also use Locally AI on iPhone, iPad, and Mac. That app lets you run Llama, DeepSeek, Qwen, and Gemma models locally, and is optimized for Apple Silicon. I’ve personally been using the Google AI Edge Gallery app, and it’s been pretty smooth.

Regardless of which route you go, the setup is genuinely as simple as it gets: you download the model you’d like to use offline (in this case, Gemma-4-E2B-it or Gemma-4-E4B-it) and that’s about it. The former requires 2.5GB to install, whereas the latter requires 3.61GB. I’ve been using the Gemma-4-E2B-it model on my iPhone 15 Pro Max, and well, I’m genuinely impressed by how much it can handle.

Gemma 4 has replaced cloud models for my everyday AI tasks

The tasks that keep me coming back

I might be a writer for publications that are always at the whims of Google, but I’m not going to lie to you and say I still Google every little question that pops into my head. Like most people, I’ve been turning to AI instead. The accuracy of the response is another story, but it’s not a bad way to get a quick answer by any means. Similarly, like a lot of people, a significant chunk of the tasks I use AI for are pretty surface-level. Think quick text cleanups, drafting an email, or explaining something I don’t quite get. I’m also a student, and AI definitely has a big role in my learning process these days. I’m always asking it to break down complex concepts, quiz me on topics, walk me through problems step by step, or explain something my professor glossed over in five seconds (usually while I’m sitting in said professor’s class).

None of these tasks need flagship AI models like Opus 4.7 and GPT 5.4. More importantly, none of them need an internet connection or a server thousands of miles away to process your request. Gemma 4 is excellent at all of these tasks, and it’s what I’ve been using exclusively for them since I installed it. Gemma 4 also isn’t limited to just chat. Through the AI Edge Gallery app, you can use Ask Image to identify objects or read text from photos, Audio Scribe for instant offline voice transcriptions, Agent Skills that let the model use tools like Wikipedia and maps, and even a Prompt Lab for fine-tuning how the model responds. And again, all of the above runs entirely on your phone!

I regret sleeping on local LLMs for as long as I did

Now, I’m in no way, shape, or form saying that Gemma 4 is going to replace ChatGPT, Claude, or Gemini for everything. It won’t. But also, it doesn’t really need to! It just has to handle the lightweight everyday stuff, and it does that more than well enough. Turns out, a 2.5GB model on your phone with no internet is a lot more useful than you’d think.