Ever since I jumped headfirst into the local LLM rabbit hole, I’ve grown fond of revitalizing old PCs by turning them into reliable AI workstations. With the right tweaks, I’ve even managed to run powerful LLMs that can rival their cloud-based counterparts on something as outdated as a 10-year-old rig. That said, most of my hardcore LLM experiments involve full-fledged x86 gaming systems with dedicated graphics cards and extra RAM.

Still, a Raspberry Pi 5 can handle models of up to 4B parameters without buckling under the extra load, which makes it a surprisingly decent option for hosting embedding models and simple chatbots. But since I wanted to run models that wouldn’t otherwise fit on this SBC, I figured I could try clustering some spare boards. And well, it’s probably one of the most cursed projects I’ve worked on (though it still has some sliver of utility).

Building a llama.cpp cluster wasn’t that hard

I had to compile the inference engine on both systems, though

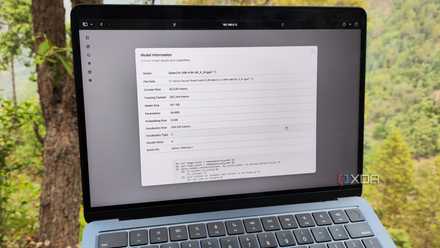

Starting with the SBCs I wanted to use as the guinea pigs for this project, I’d initially planned to spin up a cluster out of three devices. However, I quickly realized that most of my ARM boards were already engaged in some experiment or another, leaving a Raspberry Pi 5, Libre Computer Alta, and Le Frite as the only viable options. Unfortunately, the Le Frite is too weak for this project, and its USB 2.0 port and 100M Ethernet would end up bottlenecking an already feeble setup. So, I went with a 2-node cluster consisting of a Raspberry Pi 5 (8GB) and a Libre Computer Alta (4GB), with llama.cpp’s RPC backend splitting the inference tasks between the two systems.

Fortunately, the setup process was a lot simpler than I’d expected, even though I had to compile llama.cpp from scratch. Once I’d armed both systems with a CLI distro (an older version of Ubuntu on the Alta and Raspberry Pi OS Lite on you-know-what) and configured openssh-server, I logged into them via PuTTY and installed the prerequisite packages by running sudo apt install -y git build-essential cmake pkg-config. Then, I cloned the llama.cpp repo with git clone https://github.com/ggml-org/llama.cpp.git and switched to its freshly created directory via the cd llama.cpp command. Finally, I created yet another folder called build-rpc via mkdir -p build-rpc before switching to it and executing the following commands to compile llama.cpp with RPC capabilities:

cmake .. -DGGML_RPC=ON -DCMAKE_BUILD_TYPE=Release

cmake --build . --config Release -j$(nproc)
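Since the same build has to be repeated on every node, the steps above can be bundled into one small script. The following is only a sketch of that idea: the run helper, the DRY_RUN switch, and the build_llama_rpc function name are my own additions for illustration, while the commands themselves are the ones listed above.

```shell
# Hypothetical helper: set DRY_RUN=1 to print each command instead of executing it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Build llama.cpp with the RPC backend enabled; meant to be run on every node.
# The body runs in a subshell, so the cd calls do not change the caller's directory.
build_llama_rpc() (
    # Fall back to 2 parallel jobs if nproc is unavailable.
    njobs=$(nproc 2>/dev/null || echo 2)
    run sudo apt install -y git build-essential cmake pkg-config &&
    run git clone https://github.com/ggml-org/llama.cpp.git &&
    run cd llama.cpp &&
    run mkdir -p build-rpc &&
    run cd build-rpc &&
    run cmake .. -DGGML_RPC=ON -DCMAKE_BUILD_TYPE=Release &&
    run cmake --build . --config Release -j"$njobs"
)
```

Calling DRY_RUN=1 build_llama_rpc prints the seven commands for review, and calling build_llama_rpc on its own actually executes them, stopping at the first failure.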

Since I wanted the Alta SBC to act as the secondary server rig, I ran ./bin/rpc-server -H 0.0.0.0 -p 50052 on it and left the RPC server running. After using the SCP command to move some LLMs from my main PC to the Raspberry Pi node, I ran the ./bin/llama-server -m /home/ayush/models/Qwen3.5-2B-Q4_K_M.gguf --rpc 192.168.0.150:50052 --host 0.0.0.0 --port 8080 command and waited for it to finish loading the model.
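The port passed to --rpc on the Raspberry Pi has to be the one rpc-server listens on, so it can help to derive both command lines from a single pair of variables. This is a minimal sketch under that assumption: RPC_HOST, RPC_PORT, worker_cmd, and head_cmd are hypothetical names I introduced, and the flags are the ones used in the commands above.

```shell
# Shared settings (hypothetical variable names); override them via the environment.
RPC_HOST="${RPC_HOST:-192.168.0.150}"   # address of the Alta worker node
RPC_PORT="${RPC_PORT:-50052}"           # must be identical on both nodes

# Prints the command to run on the worker node (the Alta).
worker_cmd() {
    echo "./bin/rpc-server -H 0.0.0.0 -p ${RPC_PORT}"
}

# Prints the command to run on the head node (the Raspberry Pi).
# $1 is the path to the GGUF model file.
head_cmd() {
    echo "./bin/llama-server -m $1 --rpc ${RPC_HOST}:${RPC_PORT} --host 0.0.0.0 --port 8080"
}
```

On the Alta, sh -c "$(worker_cmd)" would start the RPC server, and on the Pi, sh -c "$(head_cmd /home/ayush/models/Qwen3.5-2B-Q4_K_M.gguf)" would start llama-server against it. Model paths containing spaces would need extra quoting.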

Turns out, the cluster’s performance was worse than a standalone SBC’s

I blame slow network provisions for this bottleneck

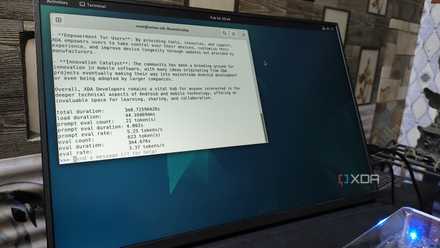

Since I was using the fairly lightweight Gemma 3 4B, I expected my cluster to perform somewhat better than just my Raspberry Pi. However, running a few prompts via llama-server’s web UI proved otherwise. And I’m not talking about complex prompts or inference tasks involving MCP servers, either. For something as simple as “Tell me something cool,” the cluster would struggle to hit 2.20 tokens/second. So, I restarted my Raspberry Pi and ran the llama-server command once again, except I got rid of the --rpc flag this time. Sure enough, the inference engine managed to hit 4.37 t/s, which is almost twice as fast as the clustered setup!
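To put the two measurements side by side, a couple of awk one-liners are enough. The speedup and sec_per_token function names below are hypothetical helpers, and the inputs are simply the two throughput figures quoted above.

```shell
# Ratio of two throughput figures (tokens/second), rounded to two decimals.
speedup() {
    awk -v a="$1" -v b="$2" 'BEGIN { printf "%.2f\n", a / b }'
}

# Seconds spent per generated token at a given tokens/second rate.
sec_per_token() {
    awk -v r="$1" 'BEGIN { printf "%.2f\n", 1 / r }'
}

speedup 4.37 2.20      # standalone Pi vs. cluster, prints 1.99
sec_per_token 2.20     # cluster, prints 0.45
sec_per_token 4.37     # standalone Pi, prints 0.23
```

In other words, the standalone Pi spends roughly a quarter of a second on each token, while the cluster needs close to half a second.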

In theory, the cluster should either hit higher token generation rates or, at the very least, provide speeds comparable to a Raspberry Pi-only setup. But it makes perfect sense once I factor the network and storage bottlenecks into the equation. You see, both SBCs feature a 1GbE connection, which is rather slow for high-speed AI inference tasks. Worse still, I’d run out of SSDs in my home lab, so I had to make do with mere microSD cards, which certainly contribute to the speed factor (or the lack thereof). Toss in the fact that LLM operations are very sensitive to latency, and it’s clear why my cluster performs terribly. I was about to label this project a failure and wrap things up here, but I wanted to try one last experiment before dissolving the cluster…
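For a rough feel of what the link speed means, here is a back-of-the-envelope sketch. It assumes the head node has to ship the offloaded tensors to the worker over the network when the model loads, and the 2000 MB payload is a made-up figure for illustration rather than something I measured. The min_transfer_seconds helper is likewise hypothetical.

```shell
# Theoretical lower bound, in seconds, for moving size_mb megabytes
# over a link rated at link_mbps megabits per second (no protocol overhead).
min_transfer_seconds() {
    awk -v mb="$1" -v mbps="$2" 'BEGIN { printf "%.1f\n", mb * 8 / mbps }'
}

min_transfer_seconds 2000 1000     # 2000 MB over 1GbE, prints 16.0
min_transfer_seconds 2000 10000    # same payload over 10GbE, prints 1.6
```

This only bounds bulk transfers. The per-token slowdown comes from the round trips made between the nodes for every generated token, which depend on latency more than on raw bandwidth.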

But the cluster can run LLMs that would otherwise be too heavy for my RPi

Just don’t look at the token generation speeds

While its lackluster performance was a total buzzkill, my main goal behind this wacky project was to run large models that a Raspberry Pi with just 8GB of RAM wouldn’t be able to host. So, I spun llama-server up once again without the RPC flag and began working my way up the model parameter size. Qwen 3.5 (9B) is where llama-server crashed, as the SBC couldn’t accommodate the massive model.

But when I ran it with the RPC flag pointing to the Alta, llama-server was able to load the LLM with relative ease. Just to satisfy my curiosity, I opened the web UI and began prompting the LLM. Well, it certainly worked, though it could only generate 1.27 tokens every second. That’s nowhere near a viable number for my productivity tasks and coding workloads. But it’s still fairly usable for automated tasks like generating tags for bookmarks or performing OCR scans on documents, especially considering that I can just leave my SBCs running all day without worrying about their power consumption.
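As an illustration of the bookmark-tagging idea, here is a sketch that sends a short request to llama-server’s OpenAI-compatible /v1/chat/completions endpoint. The prompt wording, the 32-token cap, the LLAMA_HOST and LLAMA_PORT variables, and the function names are all assumptions of mine, not something from my actual setup.

```shell
# Escape backslashes and double quotes so a string can sit inside a JSON value.
# (Minimal on purpose: it does not handle newlines or control characters.)
json_escape() {
    printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# Build the JSON request body for a bookmark title passed as $1.
tag_payload() {
    title=$(json_escape "$1")
    printf '{"messages":[{"role":"user","content":"Suggest three short tags for this bookmark: %s"}],"max_tokens":32}' "$title"
}

# Send the request to llama-server (defaults assume it runs locally on port 8080).
request_tags() {
    curl -s "http://${LLAMA_HOST:-127.0.0.1}:${LLAMA_PORT:-8080}/v1/chat/completions" \
        -H 'Content-Type: application/json' \
        -d "$(tag_payload "$1")"
}
```

Running request_tags "llama.cpp RPC notes" returns the raw JSON response. At around 1.27 t/s, a 32-token reply takes roughly 25 seconds, which is tolerable for a background job that chews through bookmarks overnight.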

And to be brutally honest, I figured I’d end up measuring seconds per token instead of tokens per second. So, a token generation rate of 1.27 t/s is rather surprising, especially since it’s a model that my SBC couldn’t even load in the first place. While I probably wouldn’t use this cluster for SBC inference tasks, RPC certainly sounds useful. In fact, I might just try using it for my existing LXC-based LLM-hosting workstations, which feature full-fledged 10G NICs.