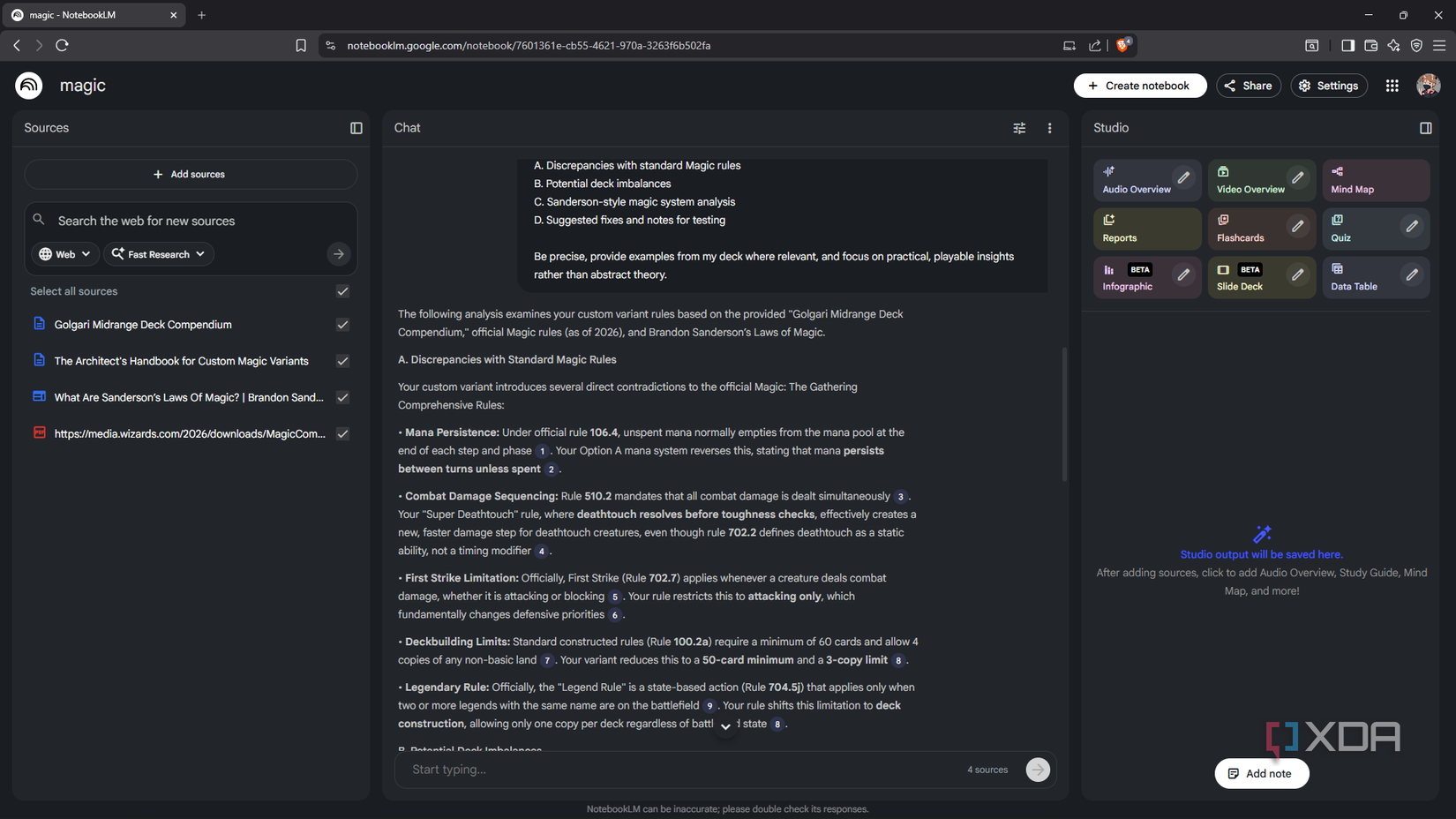

NotebookLM is genuinely one of the best AI tools I use, and I've been on it long enough to have a real opinion on it. The way it stays grounded in your sources, the citation behavior, and the interactive mind maps are some of the most useful features in my workflow. Nothing else does what it does at the same level, and it's free, which always matters.

The thing is, it's a Google product. Your files go to their servers, get processed on their infrastructure, and sit in your account until you delete them. Google is pretty upfront that your content doesn't train their models (and as far as I can tell, they mean it), but that's not the same as those files not existing somewhere on Google's servers at all. For most work, that's completely fine. Where it stops being fine is when the documents get personal.

Why even ditch NotebookLM when it's so good?

When cloud AI stops being the right call

According to Google's own documentation, NotebookLM won't use your uploaded sources to directly train its foundational models, unless you submit feedback, at which point that interaction, your content included, becomes reviewable. Your queries aren't stored. However, uploaded materials, generated outputs, and chat history are all retained for as long as the notebook exists. Usage metadata (how often you access the tool, which features you use) falls under standard Google product terms regardless. And the practical reality is that your documents are processed server-side on Google's infrastructure. For a personal Google account, that's simply how the product works.

I had a health-related test done recently and got a detailed report with a lot of data, some of which I didn't know how to interpret and some of which was just a lot to read through. Naturally, I wanted to scan through it and get a better overview of what was going on. However, I hesitated when I was about to upload it to NotebookLM; I had just watched some Reels on data privacy and couldn't shake the nagging feeling of all my health data being out of my hands. So I ended up reaching for my local LLM instead. Privacy is one of the main reasons I installed one in the first place.

How my local setup handles documents

And three ways to reach for information

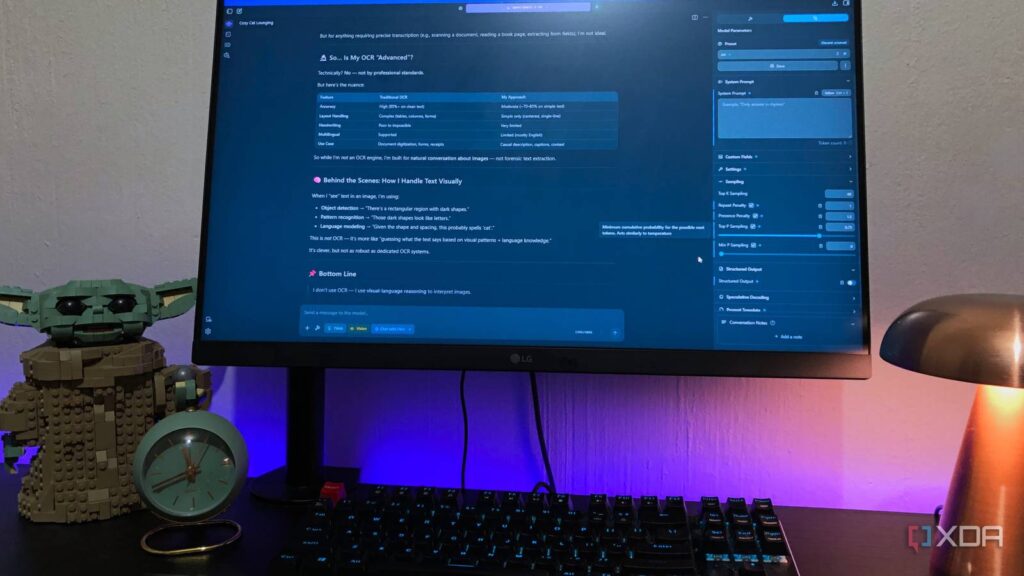

LM Studio has had built-in document support since version 0.3.0, released in mid-2024, and the way it handles documents is pretty smart. Attach a file to a chat and it first checks whether the document fits within the model's active context window. If it does, the whole thing gets injected directly into the prompt: no retrieval at all, just the full content handed to the model in one go. If the document is too long for that threshold, it switches to RAG: the document gets chunked, each segment gets embedded, and when you send a query it pulls the most semantically relevant pieces and drops those into the prompt. The model then responds based on whatever was retrieved.
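To make that fit-or-retrieve decision concrete, here is a minimal sketch in Python. It is not LM Studio's code. It assumes a rough 4-characters-per-token estimate instead of the model's real tokenizer, uses a toy bag-of-words vector in place of a real embedding model, and picks hypothetical chunk sizes (50 words, 10 words of overlap).

```python
import math
import re
from collections import Counter

# Assumption: ~4 characters per token, a common rule of thumb for English.
# LM Studio uses the loaded model's real tokenizer; this is only an estimate.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into overlapping windows of `size` words (hypothetical sizes)."""
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break
    return chunks


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_prompt(document: str, question: str, context_tokens: int, top_k: int = 3) -> tuple[str, str]:
    """Return ("full" or "rag", prompt), mirroring the fit-or-retrieve behavior in the article."""
    if estimate_tokens(document) <= context_tokens:
        # Fits in the window: hand the model the whole document, no retrieval.
        mode, context = "full", document
    else:
        # Too long: chunk it, score every chunk against the query, keep the best top_k.
        q = embed(question)
        ranked = sorted(chunk_words(document), key=lambda c: cosine(embed(c), q), reverse=True)
        mode, context = "rag", "\n---\n".join(ranked[:top_k])
    return mode, f"Document:\n{context}\n\nQuestion: {question}"
```

A real implementation would also reserve part of the window for the question and the model's answer rather than comparing the document against the full context length.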

Where it really differs from NotebookLM is that the model still has all of its training data. NotebookLM is source-grounded by design, which is a feature and the whole point. But sometimes you don't want that boundary. When I was working through my genetic report, I actually didn't want the model to just repeat back what was written. I wanted it to connect those values to clinical context, explain what a marker usually means outside the document, or pull in reference ranges it already knew. That stretch requires a model with its own knowledge. And with my Brave Search MCP connected, it can pull from the web mid-conversation when something needs to be current. So I have RAG on my document, the model's training knowledge, and live web access in the same session, without having to hop between tools.
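If you drive the model from a script instead of the chat window, you can make that "document plus the model's own knowledge" behavior explicit in the system prompt. The sketch below assumes LM Studio's local server is running with its OpenAI-compatible API on the default port 1234; the model identifier `qwen3.5-9b` and the provenance-labeling instruction are hypothetical choices, and the document text is assumed to be already extracted and short enough to inline. It bypasses LM Studio's built-in document RAG and does not call the Brave Search MCP tool.

```python
import json
import urllib.request

# LM Studio's local server exposes an OpenAI-compatible API; 1234 is its default port.
LMSTUDIO_URL = "http://localhost:1234/v1/chat/completions"
# Hypothetical identifier: use whatever name LM Studio shows for your loaded model.
MODEL = "qwen3.5-9b"

SYSTEM_PROMPT = (
    "You are helping me read a personal health report. "
    "Use the report as the primary source, but you may add general medical context "
    "and typical reference ranges from your own knowledge. "
    "Label every statement as either [report] or [general knowledge]."
)


def build_payload(report_text: str, question: str) -> dict:
    """Assemble a chat request that inlines the report and allows outside knowledge."""
    return {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": f"Report:\n{report_text}\n\nQuestion: {question}"},
        ],
        "temperature": 0.2,
    }


def ask(report_text: str, question: str) -> str:
    """POST the request to the local server and return the model's reply text."""
    body = json.dumps(build_payload(report_text, question)).encode("utf-8")
    req = urllib.request.Request(
        LMSTUDIO_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

Asking the model to tag each claim keeps the extra context useful without letting it blur into what the report actually says.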

The model I'm running is Qwen 3.5 9B, which dropped in early March 2026. The reason it works well on my 8GB GPU is architectural: Qwen 3.5 uses Gated Delta Networks (GDN), which keep the KV cache footprint considerably smaller than most models at this size, so I can push the context length in LM Studio past the default without immediately hitting a wall. When it comes to prompting, local models respond much better to specific instructions than cloud models do. They don't infer context as well, so "analyze the following document and flag any values outside normal reference ranges, and explain each one in plain language" will outperform a vague question every time.
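A quick calculation shows why a smaller KV cache matters on an 8GB card. The standard attention KV cache grows linearly with context length; linear-attention layers such as GDN instead carry a fixed-size state. The sketch assumes a hybrid layout where only 1 in 4 layers uses full attention, plus a hypothetical 32-layer, 8-KV-head, 128-dim config with an FP16 cache. None of these numbers are Qwen 3.5 9B's published architecture.

```python
GIB = 1024 ** 3


def kv_cache_bytes(layers_with_kv: int, kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Memory for a standard attention KV cache.

    Factor of 2: one key and one value vector per KV head, per layer, per token.
    bytes_per_value=2 assumes an FP16/BF16 cache (no cache quantization).
    """
    return 2 * layers_with_kv * kv_heads * head_dim * context_len * bytes_per_value


# Hypothetical 32-layer config, NOT Qwen 3.5 9B's real architecture.
CTX = 32_768
all_attention = kv_cache_bytes(32, 8, 128, CTX)
# Hybrid assumption: only 8 of 32 layers are full attention. The GDN layers keep a
# fixed-size recurrent state instead of a per-token cache, so they are left out here.
hybrid = kv_cache_bytes(8, 8, 128, CTX)

print(all_attention / GIB, hybrid / GIB)  # 4.0 1.0
```

Under these assumptions, the same 32K context costs about 1 GiB of cache instead of 4 GiB, which is the kind of headroom that lets a 9B model's weights and a longer context share 8GB of VRAM.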

The honest case for keeping NotebookLM around

The tradeoffs I can't pretend don't exist

NotebookLM runs on Gemini with a context window that can hold up to 1,000,000 tokens per source, roughly 750,000 words, the equivalent of several very long books loaded and held in view at once. For comparison, LM Studio only allows five file uploads at a time, with a combined size of up to 30MB. Even with the context length pushed up and GDN reducing the memory load, I'm working with a fraction of NotebookLM's ceiling, and when documents get long, chunking takes over.
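To put "a fraction of NotebookLM's ceiling" in numbers, here is the arithmetic as a small Python sketch. It uses the 0.75 words-per-token ratio implied by the figures above (a rough English average that varies by tokenizer and text) and assumes a hypothetical 32,768-token local context; substitute whatever length you actually set in LM Studio.

```python
# Ratio implied by the article's figures: 1,000,000 tokens is roughly 750,000 words.
WORDS_PER_TOKEN = 0.75


def tokens_to_words(tokens: int) -> int:
    return int(tokens * WORDS_PER_TOKEN)


NOTEBOOKLM_TOKENS = 1_000_000
# Hypothetical local context length; substitute the value you configured in LM Studio.
LOCAL_TOKENS = 32_768

print(tokens_to_words(NOTEBOOKLM_TOKENS))         # 750000
print(tokens_to_words(LOCAL_TOKENS))              # 24576
print(f"{LOCAL_TOKENS / NOTEBOOKLM_TOKENS:.1%}")  # 3.3%
```

At that assumed setting, anything much past 24,000 words (before leaving room for the conversation) goes through retrieval rather than full-context injection.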

RAG works by scoring document chunks against your query and surfacing the most relevant ones, which is fine until the answer you need happens to live in a chunk that didn't score well against the exact words you used. NotebookLM largely sidesteps this because it holds so much in context at once that retrieval misses are far less common. For extremely long documents, like years of lab results combined into a single file, a lengthy contract, or a complete medical history, NotebookLM is the more reliable tool (in terms of context), and I wouldn't pretend otherwise.

If you'd rather stay local even for longer documents, the most practical approach is to split them up before you start. Feed in sections rather than the whole file, and ask focused questions per section. You can use a self-hosted tool like OmniTools for this, so the workflow still stays local.
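If you'd rather script the split than use a separate tool, here is a minimal Python sketch. It is not how OmniTools works. It assumes the document is already plain text with blank lines between paragraphs, and the 1,500-word default budget is a hypothetical value to tune against your context length.

```python
def split_sections(text: str, max_words: int = 1500) -> list[str]:
    """Group blank-line-separated paragraphs into sections of at most max_words words.

    Paragraphs are never cut in half; a single paragraph longer than max_words
    becomes its own (oversized) section.
    """
    sections: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        # Start a new section if adding this paragraph would exceed the budget.
        if current and count + n > max_words:
            sections.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        sections.append("\n\n".join(current))
    return sections
```

Splitting on paragraph boundaries keeps each lab panel or contract clause intact, so each section can be attached on its own with a question aimed at just that part.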

Some documents belong on your machine

NotebookLM isn't going anywhere for me. I still use it regularly for research, work documents, reading stacks, and anything else where cloud storage isn't a concern. But some documents just aren't things I want to hand to Google, and my local LLM has handled those better than I expected. The RAG isn't perfect and the context ceiling is real, but to me that's not a dealbreaker: it's just a different kind of tool for a different kind of document.